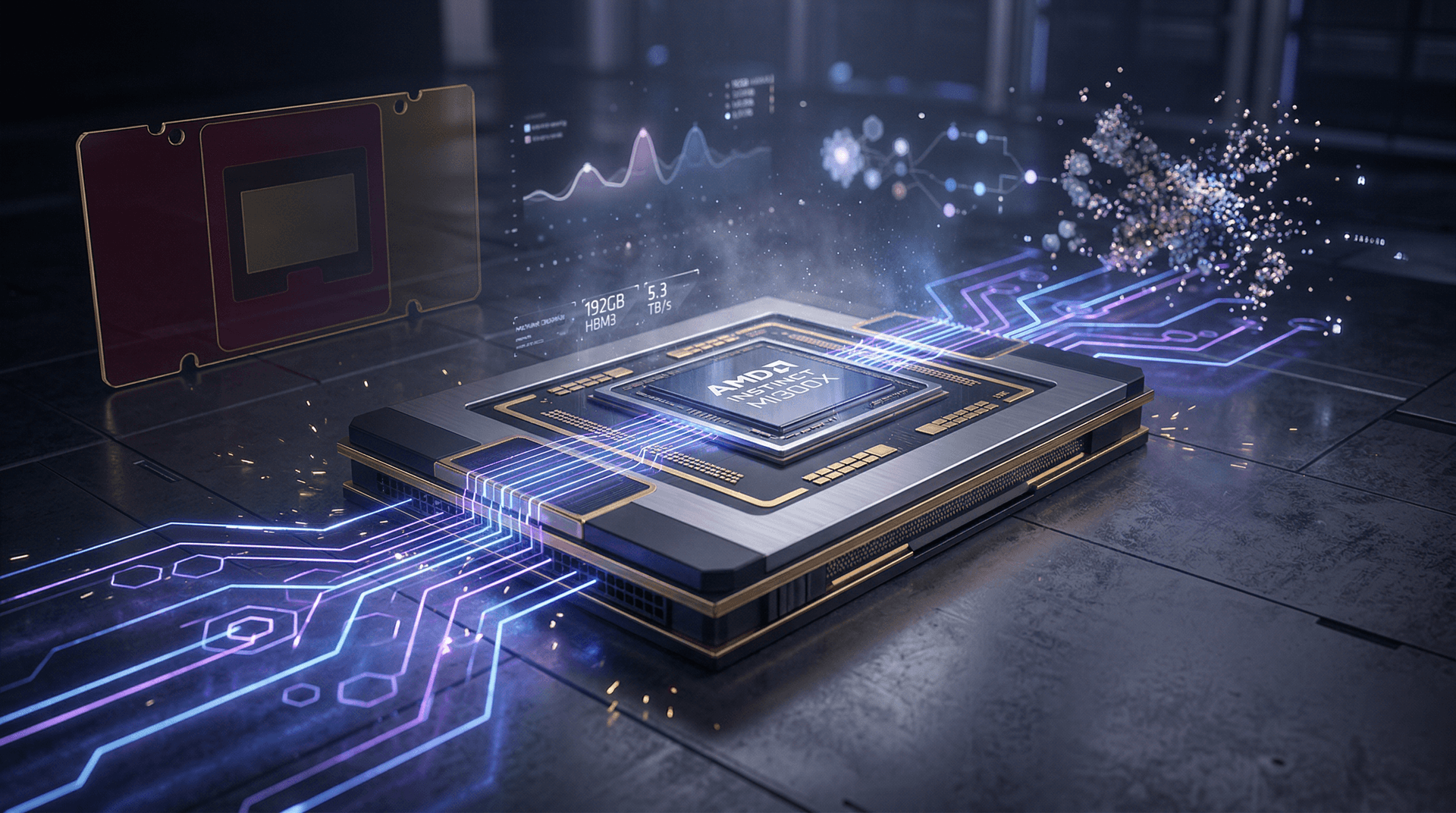

Santa Clara, CA – June 6, 2023 – In a bold move to capture a larger slice of the exploding AI market, AMD announced the Instinct MI300X accelerator at its Advancing AI 2023 event. CEO Lisa Su positioned the chip as the "world's most powerful" for AI workloads, directly challenging Nvidia's dominance with unprecedented memory capacity and performance metrics.

The MI300X is built on AMD's CDNA 3 architecture, optimized for high-performance computing (HPC) and AI training/inference. It packs 192GB of HBM3 memory – the highest capacity ever in a single GPU – with a staggering 5.3 TB/s bandwidth. This is a significant leap from competitors, enabling the handling of massive models like large language models (LLMs) without the fragmentation issues plaguing lower-memory alternatives.

Key Specifications and Benchmarks

According to AMD's disclosures, the MI300X delivers up to 2.2x better inference performance than Nvidia's H100 in key AI benchmarks, such as Llama 2 70B. For training, it offers 1.6x to 1.8x gains on models like GPT-3 175B. These figures stem from the chip's 304 compute units, third-generation Infinity Fabric interconnect, and support for FP8 precision, which accelerates generative AI tasks.

| Feature | AMD MI300X | Nvidia H100 SXM | |----------------------|---------------------|---------------------| | Memory | 192GB HBM3 | 80GB HBM3 | | Memory Bandwidth | 5.3 TB/s | 3.35 TB/s | | Compute Units | 304 | 132 (SMs) | | FP16 Performance | 2.6 PFLOPS | 1.98 PFLOPS | | Inference (Llama2) | 2.2x H100 | Baseline |

The chip's design addresses a critical pain point in AI: memory bottlenecks. As models grow – think GPT-4 scale with trillions of parameters – data center operators need accelerators that can load entire models into memory. MI300X's capacity allows this, reducing latency and boosting throughput for inference-heavy applications like chatbots and image generators.

AMD's Strategic Push into AI

AMD has been ramping up its AI portfolio since the MI250 series in 2021, but the MI300 family marks a pivotal escalation. Lisa Su emphasized during the keynote: "The MI300X redefines what's possible in AI acceleration. With the surge in generative AI, customers need scalable, efficient solutions – and we're delivering them at the right price-performance ratio."

This launch coincides with hyperscalers like Microsoft, Google, and Meta expanding AI infrastructure. AMD already powers supercomputers like Frontier (world's fastest as of June 2023) and has partnerships with Oracle and HPE. Early adopters for MI300X include major cloud providers, with general availability slated for Q4 2023.

The broader MI300 series includes the MI300A for HPC-AI convergence (APU with CPU+GPU) and MI300X for pure GPU acceleration. Together, they promise up to 4x performance over prior generations on ROCm-optimized software stacks.

Nvidia's Shadow and Market Dynamics

Nvidia holds over 80% of the AI accelerator market, fueled by its Hopper architecture (H100) and CUDA ecosystem. However, supply shortages and soaring prices – H100s fetch $30,000+ each – have opened doors for rivals. AMD's ROCm platform, while improving, lags in software maturity, but recent updates support PyTorch and TensorFlow natively.

Intel's Gaudi 2 and upcoming Falcon Shores, plus startups like Cerebras and Graphcore, add to the fray. Yet, AMD's vertical integration – from EPYC CPUs to Instinct GPUs – positions it for full-stack AI systems. Su noted: "We're not just competing on silicon; we're building an open ecosystem."

The AI boom, ignited by ChatGPT's November 2022 debut, has driven data center spending projections to $100B+ annually by 2025. Goldman Sachs estimates generative AI hardware demand could reach $200B by 2027. AMD aims to claim 20-30% share, leveraging cost advantages (MI300X expected under $20,000).

Implications for Machine Learning Workloads

For ML engineers, MI300X means faster iteration on foundation models. Its Sparse Matrix Support and matrix core accelerators excel in transformer-based architectures dominating NLP and vision tasks. Benchmarks show 30% better energy efficiency than H100, crucial for sustainable AI amid power constraints.

In inference, where most real-world value lies (e.g., Bing Chat, Stable Diffusion services), the memory edge shines. A single MI300X can serve 70B-parameter models at scale, versus multi-GPU setups on H100.

Challenges Ahead

Adoption hinges on ROCm parity with CUDA. AMD's $400M+ R&D investment in software aims to close the gap, with ROCm 5.0 supporting MI300 out-of-box. Ecosystem momentum is building: Hugging Face, PyTorch Lightning integrations incoming.

Geopolitical tensions, like U.S. chip export curbs to China, benefit U.S.-based AMD. However, Nvidia's A100/H100 install base creates lock-in.

Looking Forward

The MI300X isn't a Nvidia killer overnight, but it's a credible threat. As AI democratizes – from startups fine-tuning Llama to enterprises deploying custom models – choice matters. AMD's entry could lower barriers, spurring innovation.

Expect system announcements soon: Supermicro, Dell integrating MI300X into racks. By 2024, MI350 with HBM3e will push further.

In the AI arms race, AMD just fired a powerful shot. Watch this space as benchmarks from real users roll in.

CSN News covers the intersection of tech and finance. Follow for updates on AI hardware wars.

(Word count: 912)