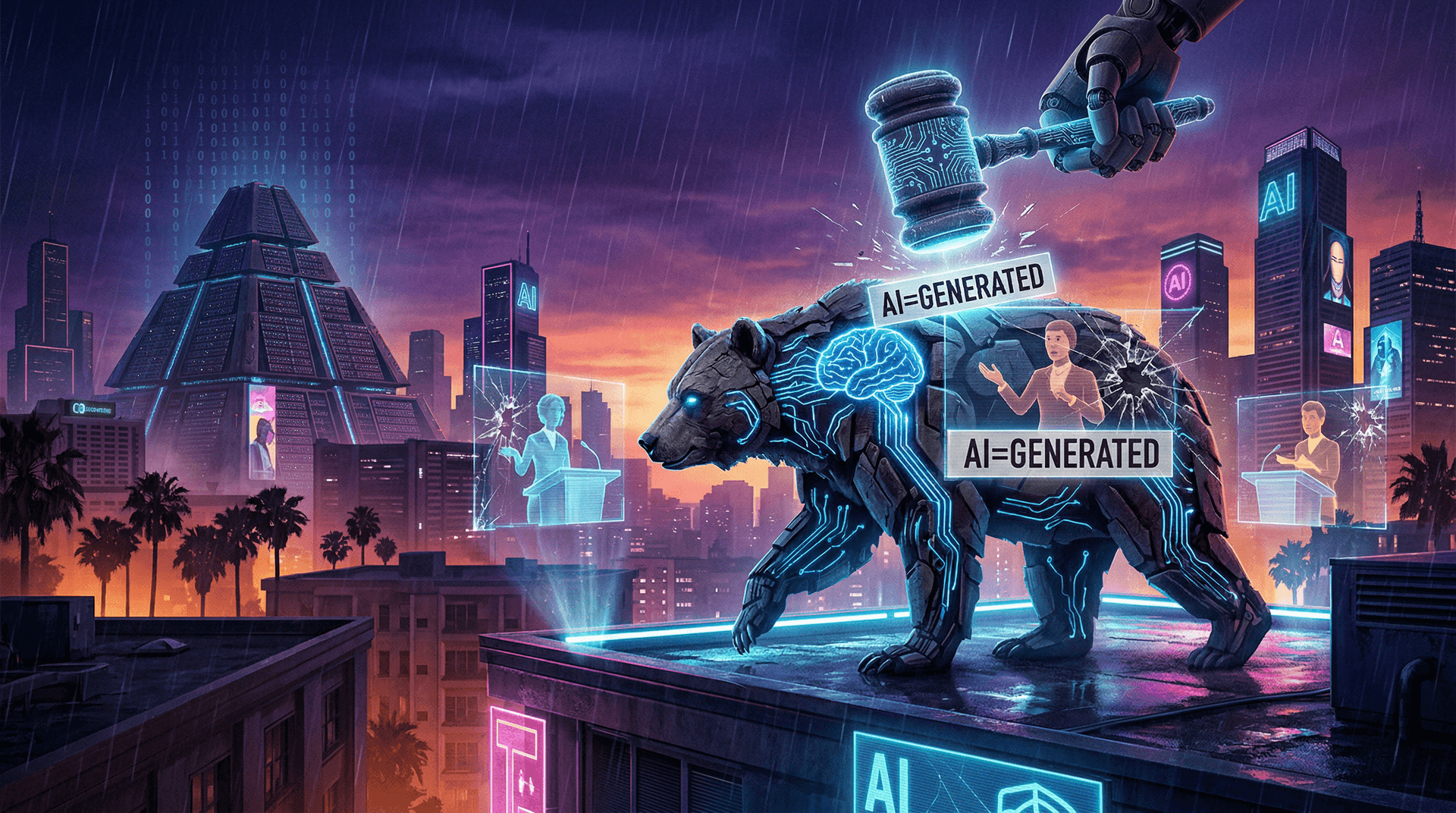

As the clock struck midnight on January 1, 2024, California did more than ring in the new year: it launched a regulatory salvo against the misuse of artificial intelligence. Three new laws took effect, aimed primarily at combating election deepfakes and imposing transparency requirements on generative AI, positioning the Golden State as a leader in AI governance. They arrive as machine learning technologies, such as the diffusion models behind Stable Diffusion and DALL-E, have made hyper-realistic fake media easier to produce than ever.

The Laws at a Glance

The three bills (AB 730, AB 2839, and AB 3073) address specific risks posed by advanced AI systems.

- AB 730 (Deepfake Election Media Ban): This law prohibits creating or distributing audio or video deepfakes of political candidates within 120 days of an election unless the content is clearly labeled as artificially generated. Violations are misdemeanors punishable by fines of up to $1,000 or up to one year in jail. The intent is to protect democratic processes from AI-fueled disinformation, a concern amplified during the 2020 U.S. elections.

- AB 2839 (Platform Disclosure Requirements): Large online platforms with at least 1 million California users must clearly and conspicuously disclose when election-related communications are AI-generated. The rule targets social media giants and aims to keep users from being deceived by ML-generated content masquerading as authentic.

- AB 3073 (Watermarking for Gen AI): Developers of generative AI models that produce realistic depictions of individuals must embed detectable watermarks or metadata in outputs. This applies to images, videos, and audio, making it easier to identify synthetic media downstream.
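
To make the idea of an embedded watermark concrete, here is a minimal Python sketch of least-significant-bit (LSB) marking, a classic steganography technique chosen purely for illustration. It is an assumption, not the method AB 3073 or any AI developer prescribes, and it is deliberately simple: it treats an image as a flat list of 8-bit grayscale values and hides a hypothetical text tag in the lowest bit of the first few pixels. Function names such as `embed_lsb` and `extract_lsb` are invented for this example.

```python
# Toy least-significant-bit (LSB) watermark, for illustration only.
# The "image" is a flat list of 8-bit grayscale values (an assumption).

def text_to_bits(text: str) -> list[int]:
    """Encode text as UTF-8 and expand each byte into 8 bits, most significant first."""
    return [(byte >> shift) & 1 for byte in text.encode("utf-8") for shift in range(7, -1, -1)]


def bits_to_text(bits: list[int]) -> str:
    """Pack bits (most significant first) into bytes and decode them as UTF-8."""
    data = bytearray()
    for start in range(0, len(bits) - len(bits) % 8, 8):
        byte = 0
        for bit in bits[start:start + 8]:
            byte = (byte << 1) | bit
        data.append(byte)
    return data.decode("utf-8", errors="replace")


def embed_lsb(pixels: list[int], message: str) -> list[int]:
    """Hide the message by overwriting the lowest bit of the first pixels."""
    bits = text_to_bits(message)
    if len(bits) > len(pixels):
        raise ValueError("image too small to hold the message")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit  # clear the lowest bit, then set it to the message bit
    return marked


def extract_lsb(pixels: list[int], n_bytes: int) -> str:
    """Read n_bytes of hidden UTF-8 data back from the pixels' lowest bits."""
    return bits_to_text([p & 1 for p in pixels[: n_bytes * 8]])


# Usage: mark a stand-in image with a hypothetical "AI-GEN" tag.
image = [(17 * i) % 256 for i in range(256)]
tag = "AI-GEN"
marked = embed_lsb(image, tag)
print(extract_lsb(marked, len(tag.encode("utf-8"))))  # AI-GEN
print(max(abs(a - b) for a, b in zip(image, marked)))  # never more than 1
```

Because only the lowest bit changes, no pixel moves by more than one intensity level, which keeps the mark invisible. The same property makes it fragile: lossy compression or resizing rewrites those bits, which is why practical watermarks are designed to survive such edits.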

The laws emerge from California's tech ecosystem, home to Google, Meta, OpenAI, and other companies that have poured billions into machine learning research.

The Rise of Deepfakes: A Machine Learning Menace

Deepfakes rely on sophisticated neural networks, including the autoencoders behind early face-swap tools, Generative Adversarial Networks (GANs), and more recent diffusion models. Since the term "deepfake" was coined in 2017 by a Reddit user who posted face-swapped videos, the technology has evolved rapidly. Open-source tools such as DeepFaceLab, along with commercial platforms, have put creation within almost anyone's reach; according to a 2023 Sensity AI report, more than 95% of deepfakes are non-consensual pornography. But political misuse is rising: in 2023, fake videos of President Biden and other leaders circulated widely.

Machine learning sits at the center of the problem. Models trained on vast datasets such as LAION-5B, a collection of roughly 5.85 billion image-text pairs, can generate convincing fakes. California's laws force a reckoning, requiring AI firms to build safeguards into their ML pipelines, potentially through adversarial training or digital signatures.
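
A digital signature lets anyone holding the right key check that a file and its "AI-generated" label have not been altered since the model produced them. The Python sketch below assumes a provider signs a small provenance record bound to the output's SHA-256 hash. For brevity it uses the standard library's HMAC-SHA256, a shared-secret stand-in; deployed provenance systems use public-key signatures so verifiers never need the signing key. The record fields, the key, and the `sign_output`/`verify_output` helpers are hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical provider secret. Real provenance schemes use public-key
# signatures so verifiers never need the signing key.
SIGNING_KEY = b"demo-key-not-for-production"


def _canonical(record: dict) -> bytes:
    """Serialize a record deterministically so signer and verifier hash the same bytes."""
    return json.dumps(record, sort_keys=True).encode("utf-8")


def sign_output(media: bytes, model_id: str) -> dict:
    """Bind a small provenance record to the media's SHA-256 hash and sign it with HMAC-SHA256."""
    record = {"model": model_id, "sha256": hashlib.sha256(media).hexdigest(), "ai_generated": True}
    record["signature"] = hmac.new(SIGNING_KEY, _canonical(record), hashlib.sha256).hexdigest()
    return record


def verify_output(media: bytes, record: dict) -> bool:
    """Return True only if neither the media nor the record has been altered."""
    claimed = dict(record)
    signature = claimed.pop("signature", "")
    if claimed.get("sha256") != hashlib.sha256(media).hexdigest():
        return False  # the file no longer matches the hash in the record
    expected = hmac.new(SIGNING_KEY, _canonical(claimed), hashlib.sha256).hexdigest()
    return hmac.compare_digest(signature, expected)  # constant-time comparison


# Usage with placeholder bytes standing in for a generated image.
media = b"synthetic image bytes"
record = sign_output(media, "example-model-v1")
print(verify_output(media, record))         # True
print(verify_output(media + b"!", record))  # False: the media was edited
```

Hashing the media and signing the record together means an attacker can neither swap in a different file nor flip the `ai_generated` flag without the check failing.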

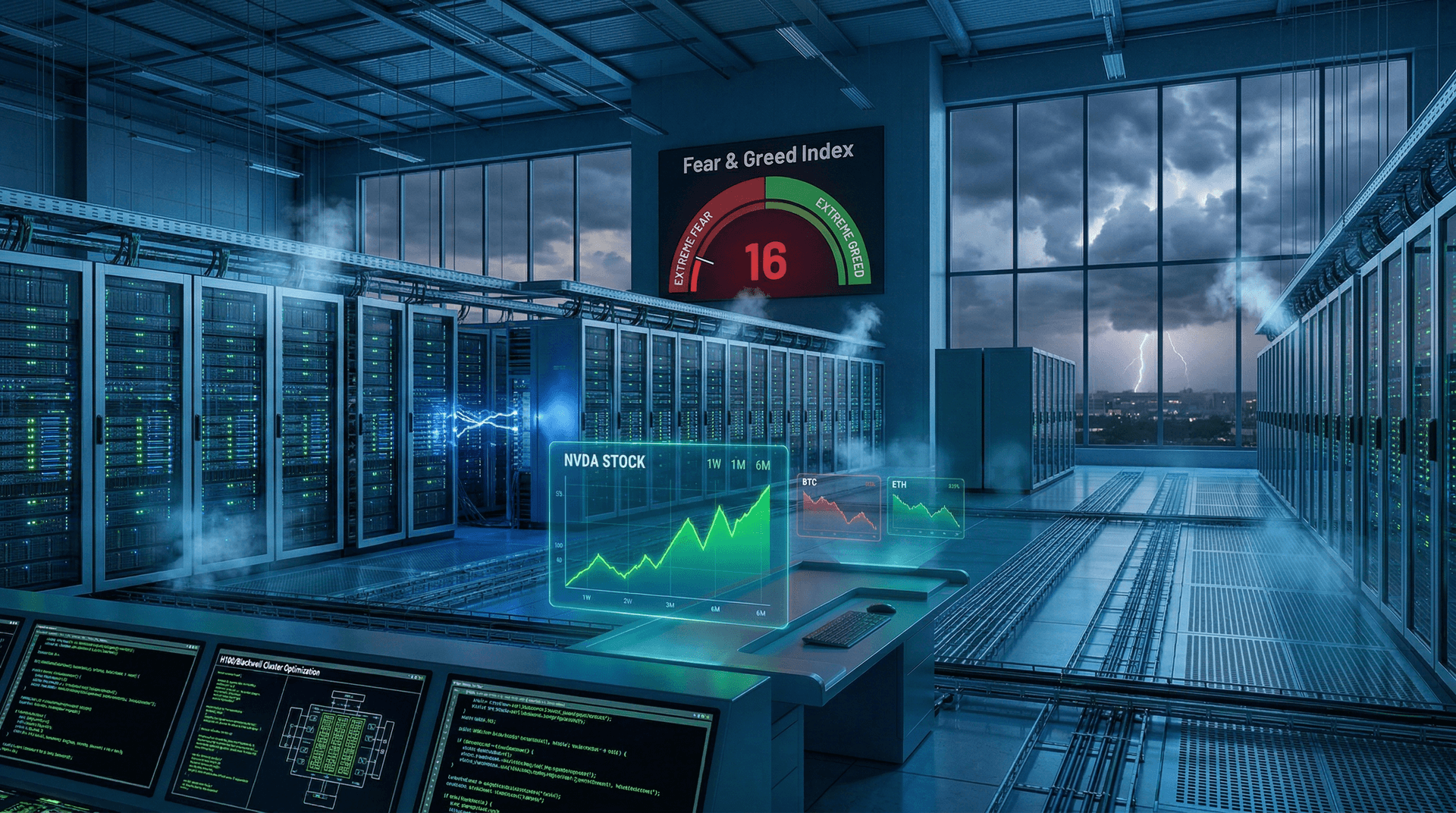

Implications for AI Developers and Industry

For machine learning engineers, compliance means rethinking model deployment. Watermarking, for instance, could rely on invisible perturbations to generated pixels or audio, or on blockchain-based provenance tracking, approaches explored in recent NeurIPS papers. Adobe has pioneered Content Credentials, tamper-evident provenance metadata built on the open standard of the Coalition for Content Provenance and Authenticity (C2PA), which Adobe co-founded; the approach aligns with AB 3073.
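
The core of "blockchain-based provenance tracking" is a hash chain: each record of how a file was created or edited includes the hash of the previous record, so quietly rewriting an earlier step breaks every link after it. The Python sketch below shows only that idea, with no distributed ledger, consensus protocol, or signatures, and it is not how C2PA Content Credentials work (C2PA relies on cryptographically signed manifests). `ProvenanceLog` and its field names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a deterministic JSON serialization of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class ProvenanceLog:
    """Append-only log in which every entry commits to the hash of the one before it."""
    entries: list[dict] = field(default_factory=list)

    def append(self, action: str, content_hash: str) -> dict:
        prev = self.entries[-1]["entry_hash"] if self.entries else GENESIS
        body = {"action": action, "content_hash": content_hash, "prev_hash": prev}
        entry = dict(body, entry_hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; editing any past entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if body.get("prev_hash") != prev or _digest(body) != entry.get("entry_hash"):
                return False
            prev = entry["entry_hash"]
        return True


# Usage: record a generation and an edit, then try to rewrite history.
log = ProvenanceLog()
log.append("generated by model", hashlib.sha256(b"version 1").hexdigest())
log.append("cropped", hashlib.sha256(b"version 2").hexdigest())
print(log.verify())  # True
log.entries[0]["action"] = "captured by camera"
print(log.verify())  # False
```

A public ledger adds value only by making the chain hard for any single party to rewrite wholesale; the tamper evidence itself comes from the hashes shown here.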

Silicon Valley heavyweights are responding. Meta, which released Llama 2 in 2023, has emphasized safety classifiers such as Llama Guard. OpenAI's DALL-E 3 includes built-in safeguards against harmful generations. However, critics argue these state laws could stifle innovation and, if other states follow suit, fragment the U.S. market.

Smaller AI startups face steeper challenges. By industry estimates, embedding watermarks adds computational overhead of potentially 10 to 20% more inference time, raising costs for cloud-based ML services.
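
To see how a per-image slowdown becomes a cost, assume, hypothetically, that serving cost scales linearly with inference time, and take an illustrative service that generates 1,000,000 images a month at $0.01 of compute each. Applying the 10 to 20% overhead estimate gives:

```latex
\begin{aligned}
C_{\text{base}} &= 10^{6} \times \$0.01 = \$10{,}000 \\
C_{\text{wm}}   &= C_{\text{base}}\,(1 + p), \qquad p \in [0.10,\ 0.20] \\
C_{\text{wm}}   &\in [\$11{,}000,\ \$12{,}000]
\end{aligned}
```

The volume and unit price are placeholders; under the linear assumption, every $10,000 of baseline compute grows by $1,000 to $2,000 a month.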

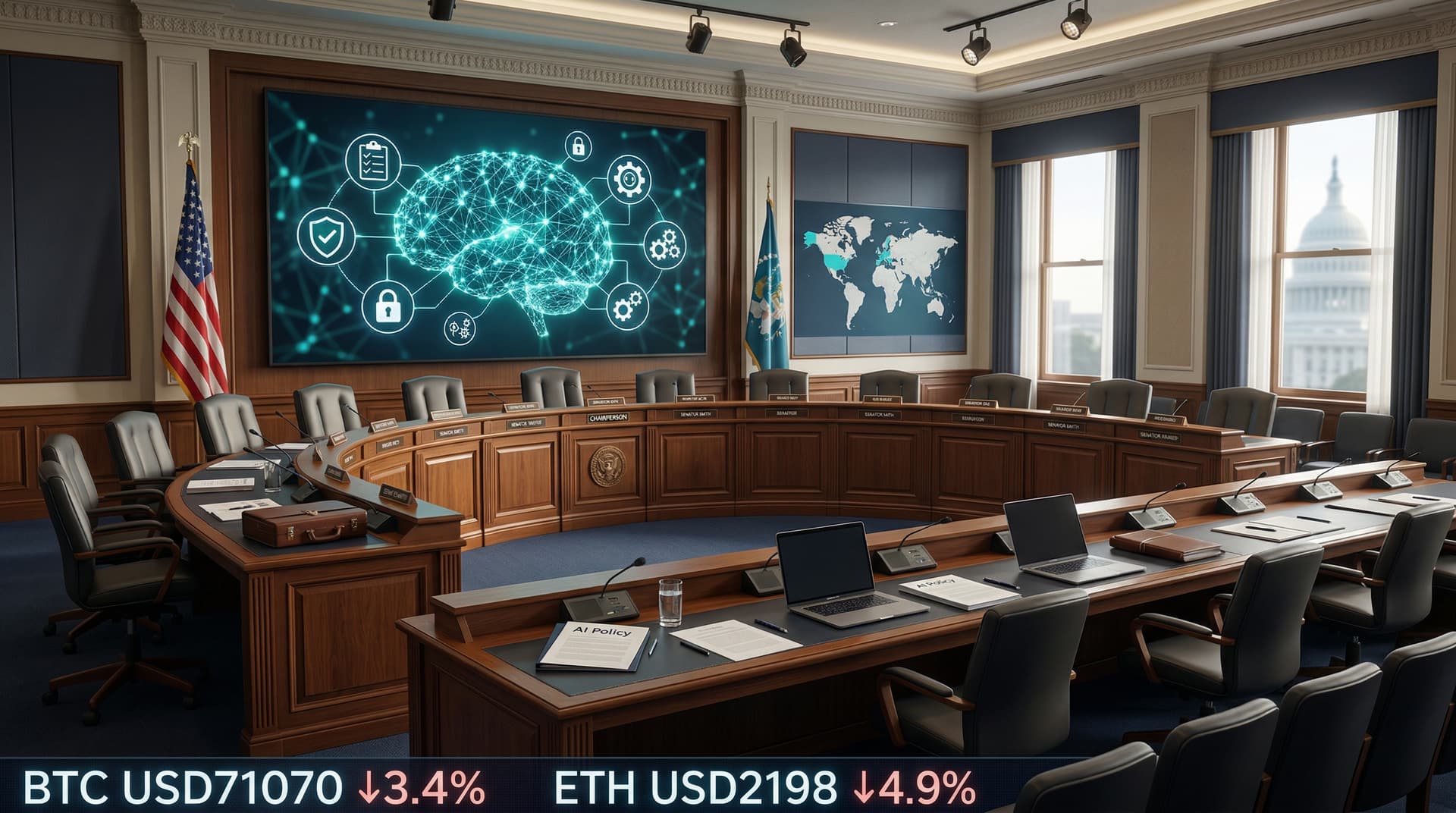

Broader Context: U.S. vs. Global AI Regulation

While California acts, federal efforts lag. The White House's October 2023 Executive Order on AI calls for safety testing and directs the Commerce Department to develop guidance on content authentication and watermarking, but it lacks enforcement teeth. Bipartisan bills like the DEEP FAKES Accountability Act have stalled in Congress.

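Contrast this with the European Union's AI Act, on which EU negotiators reached political agreement in December 2023. It sorts AI systems by risk level, strictly limits real-time remote biometric identification in public spaces, and requires deepfakes to be disclosed as such. California's approach is narrower but faster, and it could influence national policy as the state's GDPR-inspired privacy legislation did.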

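Internationally, China's interim measures on generative AI, in force since August 2023, require security assessments for some services and labeling of AI-generated content, while the UK's AI Safety Summit at Bletchley Park in November 2023 highlighted the need for global coordination.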

Expert Voices and Industry Reactions

Yann LeCun, Meta's Chief AI Scientist, tweeted in late 2023: "Regulation is needed, but it must be evidence-based to avoid killing beneficial AI." AI ethicist Timnit Gebru praised the laws as "a vital first step against disinformation."

The AI Alliance, launched in December 2023 by IBM, Meta, and dozens of other organizations, welcomed the transparency mandates and pledged to build tools for compliance.

Pitfalls remain. Enforcement depends on California's Attorney General, and detection technology isn't foolproof: adversaries can strip watermarks with ML-based attacks.
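
Stripping a watermark does not always require a sophisticated model. Serious attacks on robust watermarks may use ML, for example by regenerating an image through a diffusion model, but a naive mark falls to far less. The self-contained Python sketch below embeds a hypothetical 256-bit toy least-significant-bit (LSB) mark, then wipes it by replacing every pixel's lowest bit with a random one, a change of at most one intensity level. The scrubbing step is a simple non-ML transformation chosen for illustration, and all helper names are invented.

```python
import random


def embed_bits(pixels: list[int], bits: list[int]) -> list[int]:
    """Toy LSB watermark: write each bit into the lowest bit of one pixel."""
    marked = list(pixels)
    for i, bit in enumerate(bits):
        marked[i] = (marked[i] & ~1) | bit
    return marked


def extract_bits(pixels: list[int], n: int) -> list[int]:
    """Read the lowest bit of the first n pixels."""
    return [p & 1 for p in pixels[:n]]


def scrub_lsb(pixels: list[int], seed: int = 0) -> list[int]:
    """Stripping 'attack': replace every pixel's lowest bit with a random bit.
    Each value moves by at most 1, which is visually imperceptible."""
    rng = random.Random(seed)
    return [(p & ~1) | rng.getrandbits(1) for p in pixels]


def bit_accuracy(expected: list[int], recovered: list[int]) -> float:
    """Fraction of watermark bits recovered correctly (0.5 is chance level)."""
    return sum(e == r for e, r in zip(expected, recovered)) / len(expected)


rng = random.Random(1)
image = [rng.randrange(256) for _ in range(1024)]  # stand-in grayscale pixels
mark = [rng.getrandbits(1) for _ in range(256)]    # hypothetical 256-bit watermark

marked = embed_bits(image, mark)
print(bit_accuracy(mark, extract_bits(marked, 256)))    # 1.0 before the attack
attacked = scrub_lsb(marked)
print(bit_accuracy(mark, extract_bits(attacked, 256)))  # roughly 0.5, i.e. chance
```

Recovering about half the bits is what random guessing achieves, so after scrubbing the detector learns nothing, even though the image looks unchanged.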

Looking Ahead: 2024 and Beyond

These laws set a precedent for 2024, with elections looming. Expect copycat legislation in New York and Texas. For machine learning, 2024 could see standardized watermarking protocols emerge, boosting trust in AI outputs.

As AI integrates deeper into daily life—from chatbots to autonomous vehicles—California's move underscores a key tension: harnessing ML's power while mitigating risks. With tools like GPT-4 and Stable Diffusion XL pushing boundaries, proactive governance is no longer optional.

In the epicenter of innovation, California is drawing a line in the silicon sand. Whether this sparks a regulatory renaissance or innovation chill remains to be seen.

CSN News, January 4, 2024
