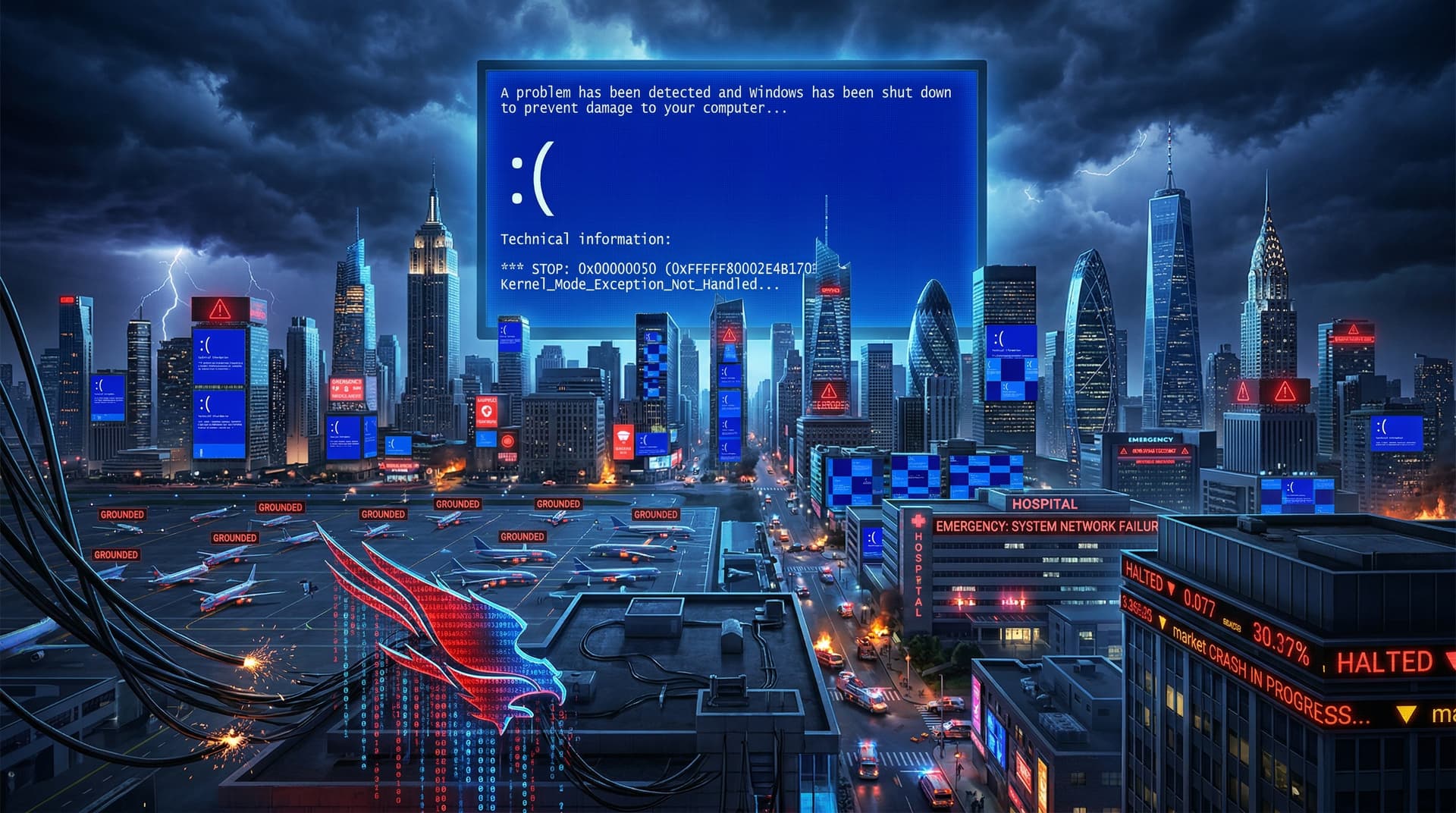

On July 19, 2024, the world witnessed one of the most disruptive IT incidents in recent history. A routine content update pushed by cybersecurity giant CrowdStrike to its Falcon Sensor software caused millions of Windows machines to crash into a boot loop, displaying the infamous Blue Screen of Death (BSOD). Airlines grounded flights, hospitals canceled procedures, banks and broadcasters were knocked offline, and countless businesses came to a standstill. This review dissects the event's timeline, root causes, far-reaching impacts, corporate responses, and critical lessons for the tech ecosystem.

Timeline of the Meltdown

The outage unfolded rapidly. At approximately 04:09 UTC, CrowdStrike deployed Channel File 291, a sensor configuration update for its Falcon endpoint detection platform, which runs on millions of endpoints worldwide. Windows hosts that were online and received the file before CrowdStrike reverted it at 05:27 UTC crashed en masse within minutes; Microsoft later estimated that about 8.5 million Windows devices were affected.

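- 13:00 UTC: BSOD reports surge from U.S. East Coast users as their workday begins; Australia and Asia, where the update landed on Friday afternoon local time, had been hit hours earlier.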

- 14:00 UTC: Delta Air Lines grounds all flights; United and American Airlines report delays.

- 16:00 UTC: Microsoft confirms the issue stems from a CrowdStrike driver incompatibility.

- 18:00 UTC: Global media frenzy; emergency services in the UK and Australia disrupted.

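Affected machines halted with stop code 0x00000050 (PAGE_FAULT_IN_NONPAGED_AREA), naming csagent.sys as the failing module, and many were left unbootable without manual intervention. Recovery meant booting into Safe Mode or the Windows Recovery Environment, deleting the file matching C-00000291*.sys in C:\Windows\System32\drivers\CrowdStrike\, and rebooting. Machines encrypted with BitLocker also needed their recovery key before the drive could be touched, so the process could take hours per machine and largely had to be done by hand.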
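CrowdStrike's published workaround boiled down to deleting every file matching C-00000291*.sys in the CrowdStrike driver folder. The Python sketch below illustrates only that deletion step, assuming it runs with administrator rights after the host has been brought up in Safe Mode or the Windows Recovery Environment. The function name `remove_channel_file_291` is hypothetical; real fleets relied on manual work and vendor or Microsoft recovery tooling, not on this script.

```python
from pathlib import Path

# Folder named in CrowdStrike's workaround. On a real host it is reached from
# Safe Mode or the Windows Recovery Environment with administrator rights.
CROWDSTRIKE_DIR = Path(r"C:\Windows\System32\drivers\CrowdStrike")

def remove_channel_file_291(directory: Path = CROWDSTRIKE_DIR) -> list[str]:
    """Delete every file matching C-00000291*.sys in `directory` (hypothetical helper).

    Returns the names of the deleted files in sorted order so the operator
    can log exactly what was removed. Other channel files are left alone.
    """
    removed: list[str] = []
    for path in sorted(directory.glob("C-00000291*.sys")):
        path.unlink()
        removed.append(path.name)
    return removed
```

Matching on a pattern mirrors the published guidance, which used a wildcard rather than one exact file name; everything else in the folder is left untouched.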

Root Causes: A Perfect Storm of Errors

CrowdStrike's Falcon platform is a leader in endpoint detection and response (EDR), protecting against advanced threats. However, this incident wasn't a cyberattack but a self-inflicted wound from a validation failure.

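According to CrowdStrike's July 20 technical update, the content in Channel File 291, which controls how Falcon evaluates named pipe execution on Windows, triggered a logic error in the sensor. The preliminary Post Incident Review that followed on July 24 added that a bug in the Content Validator had let one problematic template instance pass validation. When the sensor's Content Interpreter loaded that content, it performed an out-of-bounds memory read, and because the sensor runs inside the Windows kernel, the resulting unhandled exception crashed the entire operating system rather than a single process.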
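CrowdStrike's later root cause analysis, published August 6, narrowed the failure down further: the new template type defined 21 input fields, but the code that fed the Content Interpreter supplied only 20 values, so content that read the 21st field reached past the end of the input array. A minimal Python sketch of the missing check follows, assuming a simplified, hypothetical rule format; `TemplateInstance`, `validate`, and `evaluate` are illustrative names, not CrowdStrike's code. The validator rejects any rule that references a field the sensor does not actually supply.

```python
from dataclasses import dataclass

@dataclass
class TemplateInstance:
    """Hypothetical detection rule that reads some of the sensor's input fields."""
    name: str
    field_indices: list[int]  # positions in the input array the rule inspects

def validate(instance: TemplateInstance, supplied_inputs: int) -> list[str]:
    """Return the problems found; an empty list means the instance may ship.

    The key check: every field the rule reads must exist in the input
    array the sensor actually supplies at run time.
    """
    problems: list[str] = []
    for idx in instance.field_indices:
        if not 0 <= idx < supplied_inputs:
            problems.append(
                f"{instance.name}: field {idx} is outside the "
                f"{supplied_inputs} supplied inputs"
            )
    return problems

def evaluate(instance: TemplateInstance, inputs: list[str]) -> list[str]:
    """Read the referenced fields with no bounds check of its own.

    Python raises IndexError on a bad index; an unchecked read in a C/C++
    kernel driver can instead touch invalid memory and crash the machine.
    """
    return [inputs[idx] for idx in instance.field_indices]
```

With 20 supplied inputs, a rule that reads index 20 (the 21st field) is flagged by `validate`, while calling `evaluate` on it directly raises `IndexError`. The design point is that validation compares rules against the inputs the sensor really provides, not only against the template's own declaration.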

Key failures:

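- Bypass of Quality Gates: Channel File 291 was Rapid Response Content, checked by an automated Content Validator rather than tested on live systems before release, and it shipped to every online Windows host at once instead of in stages.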

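- Lack of Rollback Mechanism: CrowdStrike reverted the file at 05:27 UTC, but that did not help hosts already caught in the crash loop, which often went down again on reboot before the sensor could fetch the corrected content.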

- Platform-Specific Blind Spot: macOS and Linux systems were unaffected, highlighting Windows kernel driver risks.
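The first two failures compound each other: content that reaches every host at once leaves no chance to notice a crash before it is universal, and CrowdStrike's preliminary Post Incident Review committed to staggered deployment of this kind of content. A minimal Python sketch of a ring-based (canary-first) rollout with an automatic halt might look like this. The ring layout, the 1% threshold, and the `deploy` callback, which stands in for pushing content and confirming the host still reports in, are all assumptions.

```python
from typing import Callable

def staged_rollout(
    rings: list[list[str]],
    deploy: Callable[[str], bool],
    max_failure_rate: float = 0.01,  # hypothetical halt threshold
) -> tuple[list[str], bool]:
    """Deploy ring by ring, halting before the next ring if too many hosts fail.

    deploy(host) returns True if the host stays healthy after the update.
    Returns the hosts that received the update and whether rollout finished.
    """
    updated: list[str] = []
    for ring in rings:
        failures = 0
        for host in ring:
            updated.append(host)
            if not deploy(host):
                failures += 1
        if ring and failures / len(ring) > max_failure_rate:
            return updated, False  # halt: later rings never see the update
    return updated, True
```

If both canaries crash, the sketch stops after the first ring, so a bad update never reaches the production ring. A real pipeline would also wait between rings long enough for crashes and missing heartbeats to show up.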

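Microsoft's follow-up analysis of crash dumps corroborated this, noting that the fault occurred in csagent.sys, CrowdStrike's kernel-mode driver, which runs at ring 0 with full system privileges; a fault at that level takes down the whole machine, which amplified the blast radius. The irony? CrowdStrike, a company worth more than $80 billion before the outage and built to prevent chaos, became its epicenter.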

Devastating Impacts Across Sectors

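The outage's scale was unprecedented: widely described as the largest IT outage in history, it rivaled the disruption of the 2021 Colonial Pipeline ransomware attack, but without any malice behind it. The cloud-outage insurer Parametrix estimated direct losses for U.S. Fortune 500 companies, excluding Microsoft, at $5.4 billion, a figure that leaves out long-term reputational damage.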

Aviation: Grounded and Stranded

Delta canceled more than 3,500 flights through July 22, stranding 500,000 passengers. Its recovery lagged because legacy systems, including crew-scheduling software, relied on on-premises servers. United reported more than 700 cancellations.

Healthcare: Lives on Hold

U.S. hospitals such as Mount Sinai and Cleveland Clinic faced electronic health record (EHR) downtime, postponing surgeries and diverting ambulances. In the UK, NHS trusts could not access patient records, echoing the strains of the COVID era.

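Finance and Retail: Services Disrupted

Major stock exchanges largely kept trading, but parts of the financial system stumbled: the London Stock Exchange's RNS news service went down, and banks in several countries reported outages. Retailers such as Starbucks saw point-of-sale (POS) failures, and some stores fell back to cash-only operations.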

Government and Emergency Services

Australian police lost access to databases, and 911 call centers in several U.S. states faltered. The Salt Lake City Fire Department resorted to pen and paper.

Corporate Responses and Accountability

CrowdStrike's Actions: CEO George Kurtz posted a statement on X (formerly Twitter) within hours, confirming that the outage was not a security incident or cyberattack, and apologized for the disruption. Engineers published remediation scripts by 05:00 UTC on July 20. A customer call on July 22 detailed systemic fixes, including enhanced validation and canary (staged) deployments.

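Microsoft's Role: Satya Nadella posted on X that Microsoft was working with CrowdStrike to help customers bring their systems back online. Azure itself was not the cause, though Windows virtual machines running Falcon crashed like any other Windows host, and Windows users bore the brunt. Microsoft later signaled plans to let security tools do more of their work outside the kernel.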

Critics argued that CrowdStrike's rapid growth had encouraged deployment haste. The stock plunged about 11% on July 19 and slid further when trading resumed on July 22.

Lessons Learned: Rethinking Endpoint Security

This incident underscores EDR fragility in a hyper-connected world.

1. Testing Rigor: Mandatory multi-stage validation, including chaos testing and canary releases.

2. Resilience Engineering: Graceful degradation and automated rollbacks.

3. Vendor Diversification: Over-reliance on a single vendor like CrowdStrike is risky.

4. OS Independence: Cross-platform safeguards to limit monoculture vulnerabilities.

5. Incident Preparedness: More organizations are now drilling for "update gone wrong" scenarios.
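One way to make "automated rollbacks" concrete for content loaded at boot is a boot-attempt counter. The minimal Python sketch below assumes a hypothetical JSON state file holding a known-good content version, a pending one, and an attempt counter. The counter is saved before the pending content is loaded, so even a crash that prevents any cleanup leaves a record, and after `MAX_ATTEMPTS` failed boots the host falls back on its own. All names and the threshold are illustrative assumptions.

```python
import json
from pathlib import Path

MAX_ATTEMPTS = 2  # hypothetical: boots allowed for new content before rollback

def choose_content(state_file: Path) -> str:
    """Pick the content version to load at boot and record the attempt.

    The state is written back *before* the content is loaded, so a crash
    during loading still leaves evidence for the next boot.
    """
    state = json.loads(state_file.read_text())
    if state["pending"] and state["attempts"] >= MAX_ATTEMPTS:
        # Too many failed boots with the new content: abandon it.
        state["pending"] = None
        state["attempts"] = 0
    if state["pending"]:
        state["attempts"] += 1
        chosen = state["pending"]
    else:
        chosen = state["known_good"]
    state_file.write_text(json.dumps(state))
    return chosen

def mark_healthy(state_file: Path) -> None:
    """Call once the system is fully up: promote pending content to known-good."""
    state = json.loads(state_file.read_text())
    if state["pending"]:
        state["known_good"] = state["pending"]
        state["pending"] = None
    state["attempts"] = 0
    state_file.write_text(json.dumps(state))
```

In a crash-loop scenario, the first two boots load the pending version and never reach `mark_healthy`; the third boot rolls back to the known-good version without human intervention. A healthy boot instead promotes the pending version.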

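Regulators may push for audits; the EU's Digital Operational Resilience Act (DORA), which applies to banks and other financial entities from January 2025, already requires them to manage ICT third-party risk.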

Future Implications for Tech and Finance

For finance, where milliseconds matter, the outage exposed the perils of hybrid cloud and on-premises setups. Banks such as JPMorgan, minimally affected thanks to redundancies, touted their preparedness.

Cybersecurity stocks dipped, though Palo Alto Networks gained as customers weighed alternatives. Expect a wave of "CrowdStrike-proofing" services.

As of July 23, 80% of systems had recovered, but scars remain. This review grades CrowdStrike's handling a B-: swift communication earned points, but the prevention lapses deduct heavily.

In conclusion, the July 19 outage isn't just a glitch; it's a manifesto for robust software supply chains. Tech leaders must heed it to avert future apocalypses.