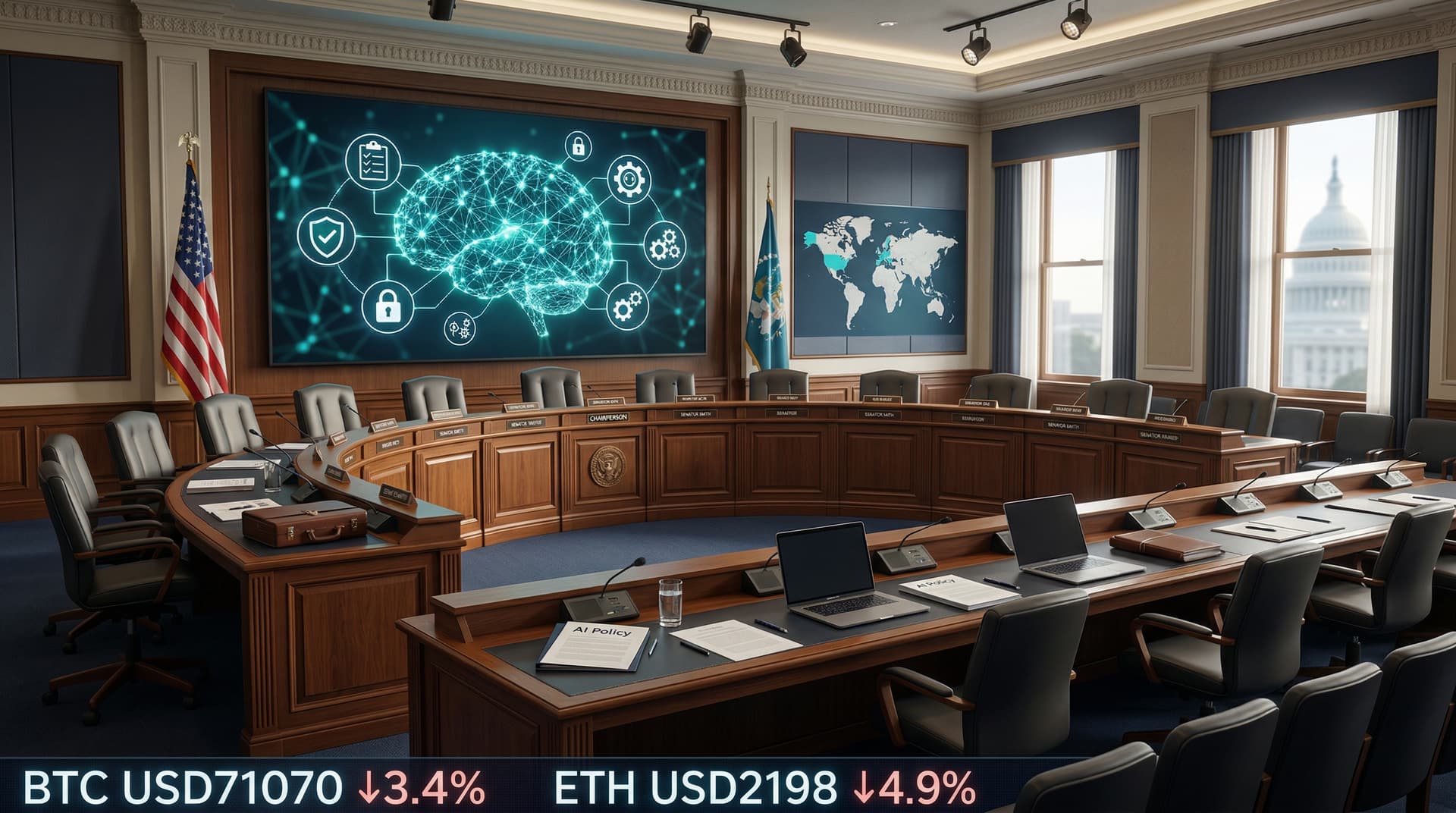

In a move that sends shockwaves through the global tech industry, the European Union's Artificial Intelligence Act (AI Act) was formally published in the Official Journal of the European Union on July 12, 2024. This publication triggers the countdown to its enforcement, positioning the EU as the first major jurisdiction to enact a comprehensive, horizontal regulatory framework for artificial intelligence. As of July 21, 2024, the 27-member bloc is gearing up for a phased rollout that could redefine how AI systems are developed, deployed, and monitored worldwide.

The Road to Publication

The AI Act's journey began in April 2021, when the European Commission proposed it as part of its broader digital strategy. After intense negotiations among the European Parliament, Council, and Commission, a political agreement was reached in December 2023, followed by formal adoption in May 2024. The July 12 publication means the Act enters into force on the twentieth day after publication, August 1, 2024, though most provisions won't apply immediately.

This risk-based regulation classifies AI systems into four categories:

- Unacceptable risk: Banned outright, including manipulative subliminal techniques, social scoring by governments, real-time biometric identification in public spaces (with exceptions), and exploitative practices like emotion recognition in workplaces or schools.

- High risk: Subject to stringent requirements, such as risk assessments, data governance, transparency, human oversight, and conformity assessments. This covers AI in critical areas like education, employment, biometrics, critical infrastructure, and product safety.

- Limited risk: Requires transparency, e.g., users must be informed when interacting with chatbots or deepfakes.

- Minimal risk: No obligations, allowing free use for video games or spam filters.
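The four tiers above can be pictured as a simple lookup. The following is only an illustrative sketch: the names `RiskTier`, `EXAMPLE_USE_CASES`, and `describe` are hypothetical, the example entries are drawn from the list above, and real classification under the Act depends on legal analysis rather than a keyword table.

```python
from enum import Enum


class RiskTier(Enum):
    # Values paraphrase the obligations described in the article.
    UNACCEPTABLE = "banned outright"
    HIGH = "stringent requirements and conformity assessment"
    LIMITED = "transparency obligations"
    MINIMAL = "no obligations"


# Illustrative examples taken from the article's list; not a legal classification.
EXAMPLE_USE_CASES = {
    "government social scoring": RiskTier.UNACCEPTABLE,
    "workplace emotion recognition": RiskTier.UNACCEPTABLE,
    "employment screening": RiskTier.HIGH,
    "critical infrastructure control": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
    "video game AI": RiskTier.MINIMAL,
}


def describe(use_case: str) -> str:
    """Return a one-line summary of the tier for a known example use case."""
    tier = EXAMPLE_USE_CASES.get(use_case)
    if tier is None:
        return f"{use_case}: not in the example table, needs case-by-case review"
    return f"{use_case}: {tier.name.lower()} risk ({tier.value})"


print(describe("spam filter"))
print(describe("employment screening"))
```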

Fines follow GDPR's turnover-based model: up to €35 million or 7% of global annual turnover, whichever is higher, for unacceptable-risk breaches; €15 million or 3% for other violations; and €7.5 million or 1% for lesser infractions such as supplying incorrect information to authorities.
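To make the fine ceilings concrete, here is a minimal sketch of the arithmetic. It assumes the ceiling is the higher of the fixed amount and the turnover percentage, which is how the Act treats larger companies (smaller firms are treated differently); the tier names and the function `max_fine_eur` are hypothetical labels chosen for illustration.

```python
# Ceilings quoted in the article: (fixed amount in EUR, percent of global annual turnover).
FINE_TIERS = {
    "unacceptable_risk_breach": (35_000_000, 7),
    "other_violation": (15_000_000, 3),
    "lesser_infraction": (7_500_000, 1),
}


def max_fine_eur(tier: str, global_turnover_eur: int) -> int:
    """Upper bound on a fine, assuming the higher of the two ceilings applies."""
    fixed_cap, percent = FINE_TIERS[tier]
    # Integer arithmetic avoids floating-point rounding on large euro amounts.
    turnover_cap = global_turnover_eur * percent // 100
    return max(fixed_cap, turnover_cap)


# A firm with EUR 1 billion turnover: 7% is EUR 70 million, above the fixed cap.
print(max_fine_eur("unacceptable_risk_breach", 1_000_000_000))  # 70000000
# A firm with EUR 100 million turnover: 7% is EUR 7 million, so the fixed cap applies.
print(max_fine_eur("unacceptable_risk_breach", 100_000_000))    # 35000000
```

These figures are ceilings, not predicted penalties; regulators would set actual fines case by case.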

Phased Implementation Timeline

The Act's rollout is designed to give stakeholders time to adapt:

| Time after entry into force | Provisions |
|-----------------------------|------------|
| 6 months (Feb 2025) | Bans on unacceptable-risk AI |
| 9 months (May 2025) | Codes of practice for general-purpose AI |
| 12 months (Aug 2025) | Rules for general-purpose AI models (e.g., ChatGPT) |
| 24 months (Aug 2026) | Most high-risk AI obligations |
| 36 months (Aug 2027) | High-risk AI in product safety |
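The month offsets in the table can be checked with a few lines of calendar arithmetic. This is a small sketch that works at month granularity only, counting from August 2024; `add_months` and `MILESTONES` are hypothetical names, and the exact application days are fixed in the Act itself rather than derived this way.

```python
MONTH_NAMES = ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
               "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"]

# (months after entry into force, provision) as listed in the table.
MILESTONES = [
    (6, "Bans on unacceptable-risk AI"),
    (9, "Codes of practice for general-purpose AI"),
    (12, "Rules for general-purpose AI models"),
    (24, "Most high-risk AI obligations"),
    (36, "High-risk AI in product safety"),
]


def add_months(year: int, month: int, offset: int) -> tuple[int, int]:
    """Shift a (year, month) pair forward by `offset` months."""
    index = year * 12 + (month - 1) + offset
    return index // 12, index % 12 + 1


for offset, provision in MILESTONES:
    year, month = add_months(2024, 8, offset)
    print(f"{MONTH_NAMES[month - 1]} {year}: {provision}")
```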

By 2027, the EU aims to have a fully operational ecosystem with designated national authorities, a European AI Office, and AI regulatory sandboxes for innovation testing.

Industry Reactions and Challenges

Tech giants have mixed responses. OpenAI's Sam Altman praised the EU's leadership in a recent interview, noting it sets a "global floor" for AI safety. Google and Microsoft have invested in compliance teams, while Meta open-sourced its Llama models under the Act's transparency rules. Startups, however, worry about compliance costs—estimated at €10,000-€2 million per high-risk system—potentially stifling innovation in smaller firms.

Critics argue the Act's broad definitions, like "general-purpose AI models" (GPAI) with systemic risk (e.g., models trained using more than 10^25 floating-point operations, or FLOPs, of total compute), could ensnare frontier models from US labs. Providers of such models must evaluate risks, report incidents, and ensure cybersecurity. The EU's extraterritorial reach means non-EU firms deploying AI in Europe must comply.
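To give a feel for the 10^25 threshold, the sketch below compares it with a rough estimate of training compute. The estimate uses a common rule of thumb of about 6 operations per parameter per training token; that heuristic is an assumption brought in for illustration and does not come from the Act, and the model sizes are invented.

```python
# Threshold cited in the article for presuming systemic risk.
SYSTEMIC_RISK_THRESHOLD_FLOPS = 10**25


def estimate_training_flops(parameters: int, training_tokens: int) -> int:
    """Rough compute estimate (assumption: ~6 operations per parameter per token)."""
    return 6 * parameters * training_tokens


def presumed_systemic_risk(training_flops: int) -> bool:
    """True when estimated training compute exceeds the cited threshold."""
    return training_flops > SYSTEMIC_RISK_THRESHOLD_FLOPS


# Hypothetical models, not real products.
small = estimate_training_flops(7 * 10**9, 2 * 10**12)     # about 8.4e22
large = estimate_training_flops(500 * 10**9, 10 * 10**12)  # about 3e25

print(presumed_systemic_risk(small))  # False
print(presumed_systemic_risk(large))  # True
```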

Enforcement poses hurdles: 27 national bodies plus the AI Office must coordinate, and technical standards from bodies like CEN/CENELEC are still evolving. Borderline cases, such as medical AI that overlaps with existing regulations, add complexity.

Global Implications

The AI Act isn't just a European affair. Its risk-management approach is echoed in the US Executive Order on AI (Oct 2023), and it sits alongside China's Interim Measures on generative AI (2023). Countries like Brazil, Canada, and Singapore are drafting similar laws, potentially creating a patchwork of regulations. US policymakers debate a lighter-touch approach via NIST frameworks, fearing that overregulation hampers competitiveness; America hosts 70% of top AI talent and firms.

For finance, AI used in credit scoring or trading is treated as high-risk if it affects individuals' rights. Investors eye opportunities in compliance tools: startups like LegalSifter and Harmonic.ai are booming.

Balancing Innovation and Safety

Proponents hail the Act as a bulwark against AI harms—deepfakes eroding elections, biased hiring tools, or autonomous weapons. A 2024 Eurobarometer survey showed 80% of Europeans want AI rules. Yet, figures like Elon Musk warn of overreach, arguing agility is key in the AI race.

The EU counters with innovation provisions: regulatory sandboxes, testing exemptions, and support for SMEs via the AI Pact—a voluntary pre-enforcement initiative with 500+ signatories already.

Looking Ahead

As the world hurtles toward AGI, the EU AI Act stands as a lighthouse. On July 12, its ink dried in the Official Journal; now, the real work begins. Will it foster trustworthy AI or become a bureaucratic quagmire? Tech leaders from Silicon Valley to Shenzhen are watching closely. By 2025, expect a flurry of conformity declarations, audits, and perhaps court challenges. For now, the message is clear: AI's wild west days are numbered.

CSN News will track developments as the August 1 entry-into-force date approaches.
