By [Your Name], Senior Tech Journalist | December 22, 2024

Google DeepMind has officially unveiled Gemini 2.0, its next-generation family of foundation models. Announced on December 11, 2024, in a detailed blog post from Google CEO Sundar Pichai and DeepMind leaders, Gemini 2.0 is pitched as a significant leap forward in multimodal capabilities, reasoning, and agentic AI. Google presents it not as an incremental update but as a foundational redesign aimed at making AI more intuitive, efficient, and actionable in real-world scenarios.

A New Architecture for the Multimodal Future

At the heart of Gemini 2.0 is its native multimodality: the models process and reason across multiple data types (text, images, audio, video, and code) from the ground up. Unlike earlier models that added modalities on top of a text-first design, Gemini 2.0's architecture is built for diverse inputs from the start. Google claims this results in emergent capabilities, such as understanding video content in context with overlaid text or generating code from visual diagrams.

The initial release focuses on Gemini 2.0 Flash (experimental), a low-latency model optimized for high-throughput tasks. Developers can access it immediately via the Gemini API, Google AI Studio, and Vertex AI. Priced competitively at $0.10 per million input tokens and $0.40 per million output tokens, it's designed for scalable deployment. Full Gemini 2.0 Pro and Gemini 2.0 Ultra are slated for early 2025, promising even greater intelligence.
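To put the quoted pricing in perspective, here is a minimal sketch of the arithmetic. It assumes the two per-million-token prices stated above are the only charges; the function name `estimate_cost_usd` and the example workload are hypothetical and not part of any Google SDK.

```python
from decimal import Decimal

# Prices as quoted in this article, in US dollars per one million tokens.
INPUT_PRICE_PER_MILLION = Decimal("0.10")
OUTPUT_PRICE_PER_MILLION = Decimal("0.40")
TOKENS_PER_MILLION = Decimal(1_000_000)


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate the cost of a workload from its total token counts.

    Hypothetical helper: assumes flat per-token pricing with no free tier,
    caching discounts, or other adjustments.
    """
    if input_tokens < 0 or output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    input_cost = Decimal(input_tokens) / TOKENS_PER_MILLION * INPUT_PRICE_PER_MILLION
    output_cost = Decimal(output_tokens) / TOKENS_PER_MILLION * OUTPUT_PRICE_PER_MILLION
    return input_cost + output_cost


# Example workload (made up for illustration): 50,000 requests,
# each with 2,000 input tokens and 500 output tokens.
requests = 50_000
total = estimate_cost_usd(requests * 2_000, requests * 500)
print(f"Estimated cost: ${total:.2f}")
```

Under these assumptions, the input and output sides of that workload each come to $10, for a total of $20.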

A standout feature is the Thinking Mode in Gemini 2.0 Flash Experimental, which lets the model deliberate step by step before responding, akin to OpenAI's o1 series but extended to multimodal inputs. Google says this mode improves performance on complex reasoning tasks by 15-20% in internal tests.

Benchmark-Beating Performance

Google didn't hold back on the numbers. It reports the following scores for Gemini 2.0 Flash against the top competitor on each benchmark:

| Benchmark | Gemini 2.0 Flash Score | Top Competitor |
|-----------|-------------------------|---------------|
| GPQA (Diamond) | 84.0% | o1-preview: 78.0% |
| AIME 2024 (Math) | 90.2% | o1-preview: 74.3% |
| LiveCodeBench (Coding) | 70.4% | o1-preview: 65.9% |
| MMMU (Multimodal) | 81.7% | GPT-4o: 69.0% |
| VideoMME (Video) | 84.8% | GPT-4V: 73.0% |
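The size of each lead is easier to compare as a gap in percentage points. The short sketch below computes that gap from the scores in the table above; the dictionary layout and the helper name `lead_in_points` are illustrative choices, not anything published by Google.

```python
# Scores copied from the table above, in percent:
# benchmark -> (Gemini 2.0 Flash, top competitor).
SCORES = {
    "GPQA (Diamond)": (84.0, 78.0),
    "AIME 2024 (Math)": (90.2, 74.3),
    "LiveCodeBench (Coding)": (70.4, 65.9),
    "MMMU (Multimodal)": (81.7, 69.0),
    "VideoMME (Video)": (84.8, 73.0),
}


def lead_in_points(model_score: float, rival_score: float) -> float:
    """Return the lead in percentage points, rounded to one decimal place."""
    return round(model_score - rival_score, 1)


gaps = {name: lead_in_points(model, rival) for name, (model, rival) in SCORES.items()}

# Print the benchmarks from the largest reported lead to the smallest.
for name, gap in sorted(gaps.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: +{gap} points")
```

On these figures the largest reported lead is on AIME 2024 and the smallest is on LiveCodeBench.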

On these figures, Gemini 2.0 leads in math, science, coding, and multimodal understanding. Notably, it handles contexts of 2 million tokens, twice the capacity of many rivals, which enables analysis of entire books, long videos, or massive codebases in a single request.

In agentic evaluations like WebArena, Gemini 2.0 Flash achieves 19.1% success on real-world web tasks, outperforming prior models by a wide margin. This hints at its potential for building autonomous agents that interact with apps, browsers, and APIs.

Revolutionizing Applications

Gemini 2.0 isn't confined to chat interfaces; it's engineered for agentic AI. Google showcased prototypes like:

- Project Mariner: A browser-based agent that navigates websites, fills forms, and performs research.

- Gemini Diffusion: Lightweight on-device models for image/video generation.

- Audio/Video Understanding: Real-time transcription, summarization, and question-answering from live streams.

For developers, integrations with Google AI Studio and Vertex AI make it easy to build custom agents. Enterprise users on Vertex AI get managed endpoints with built-in safety filters. Consumer access comes soon via the Gemini app, with features like advanced video analysis for YouTube creators.

Sundar Pichai emphasized in the announcement: "Gemini 2.0 is our most intelligent model family yet, helping people learn faster, create more, and achieve more. It's a step toward AI that acts in the real world."

Demis Hassabis, CEO of Google DeepMind, added: "We've rethought the model architecture for multimodality and long-context reasoning, unlocking new possibilities in science, creativity, and productivity."

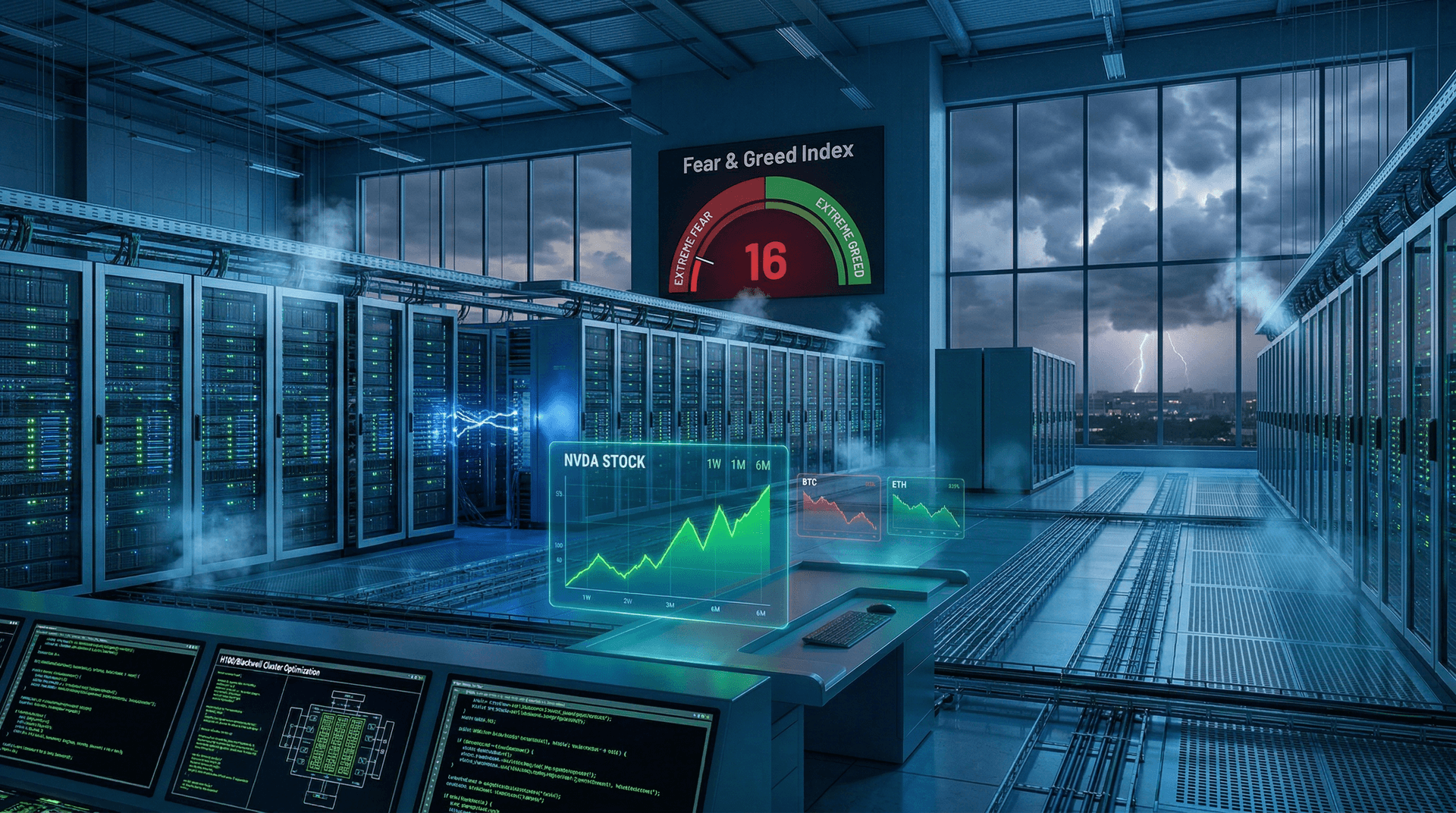

Competitive Landscape and Implications

This launch comes amid fierce rivalry. OpenAI's o1-pro (rolled out December 5, 2024) excels in reasoning but lags in multimodality. Anthropic's Claude 3.5 Sonnet leads in coding, while Meta's Llama 3.1 offers an open-weight alternative. xAI's Grok-2 pushes boundaries in humor and efficiency, but Gemini 2.0's ecosystem, tied to Google's vast data and cloud, gives it an edge.

Critics note Google's history of hype, but early API users report tangible gains. Safety remains paramount: Gemini 2.0 incorporates SynthID watermarking for generated content and red-teaming for agent behaviors.

Looking ahead, Gemini 2.0 could accelerate AI agents in 2025, from personal assistants to scientific researchers. Integration with Android and Workspace promises everyday utility, while Vertex AI positions Google against AWS Bedrock and Azure AI.

Challenges and Ethical Considerations

No AI leap is without hurdles. Energy demands for training (estimated at 10x Gemini 1.5) raise sustainability questions. Google pledges carbon-neutral operations, but scaling inference globally will test this.

Hallucinations persist, though reduced by 30% via self-fact-checking. Agent autonomy introduces risks like unintended actions, prompting new safeguards like human-in-the-loop approvals.
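A human-in-the-loop approval can be as simple as a gate between what an agent proposes and what it is allowed to execute. The sketch below illustrates that general pattern only; it is not Google's implementation, and every name in it (`ProposedAction`, `run_with_approval`, the `risky` flag) is hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    """An action an agent wants to take (hypothetical structure)."""
    name: str
    description: str
    risky: bool  # True if the action has side effects a human should review


def run_with_approval(
    action: ProposedAction,
    execute: Callable[[ProposedAction], None],
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Execute the action, asking a human first when it is marked risky."""
    if action.risky and not approve(action):
        return f"skipped: {action.name}"
    execute(action)
    return f"executed: {action.name}"


# Usage: a stand-in reviewer that rejects everything, and a log of executed actions.
executed = []
reject_all = lambda action: False
record = lambda action: executed.append(action.name)

read_page = ProposedAction("read_page", "Fetch a public web page", risky=False)
submit_form = ProposedAction("submit_form", "Submit a payment form", risky=True)

print(run_with_approval(read_page, record, reject_all))
print(run_with_approval(submit_form, record, reject_all))
```

In a real system the `approve` callback would prompt a person and wait for a decision; here it is a stub so that the control flow stays visible. Low-risk actions run without interruption, while risky ones run only after explicit approval.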

Regulators watch closely; the EU AI Act's high-risk classifications loom. Google advocates responsible scaling, aligning with its AI Principles.

The Road Ahead

Gemini 2.0 Flash is live now, with Pro/Ultra imminent. Expect rapid iterations: Google plans monthly updates. Open-weight variants via Hugging Face could democratize access.

As Pichai put it, "We're just getting started." Gemini 2.0 isn't the endgame but a pivotal chapter in AI's evolution, blending human-like reasoning with machine-scale perception.

For developers, dive into the Gemini API docs. For the rest of us, the future feels a little smarter today.
