- N-Day-Bench tests LLMs on 1,247 real vulnerabilities from 512 GitHub repos.

- GPT-5 detects 47% of flaws, ahead of Claude 4 (41%) and Gemini Ultra (38%).

- GitLab reports that adopting the benchmark cut its scan times by 32%.

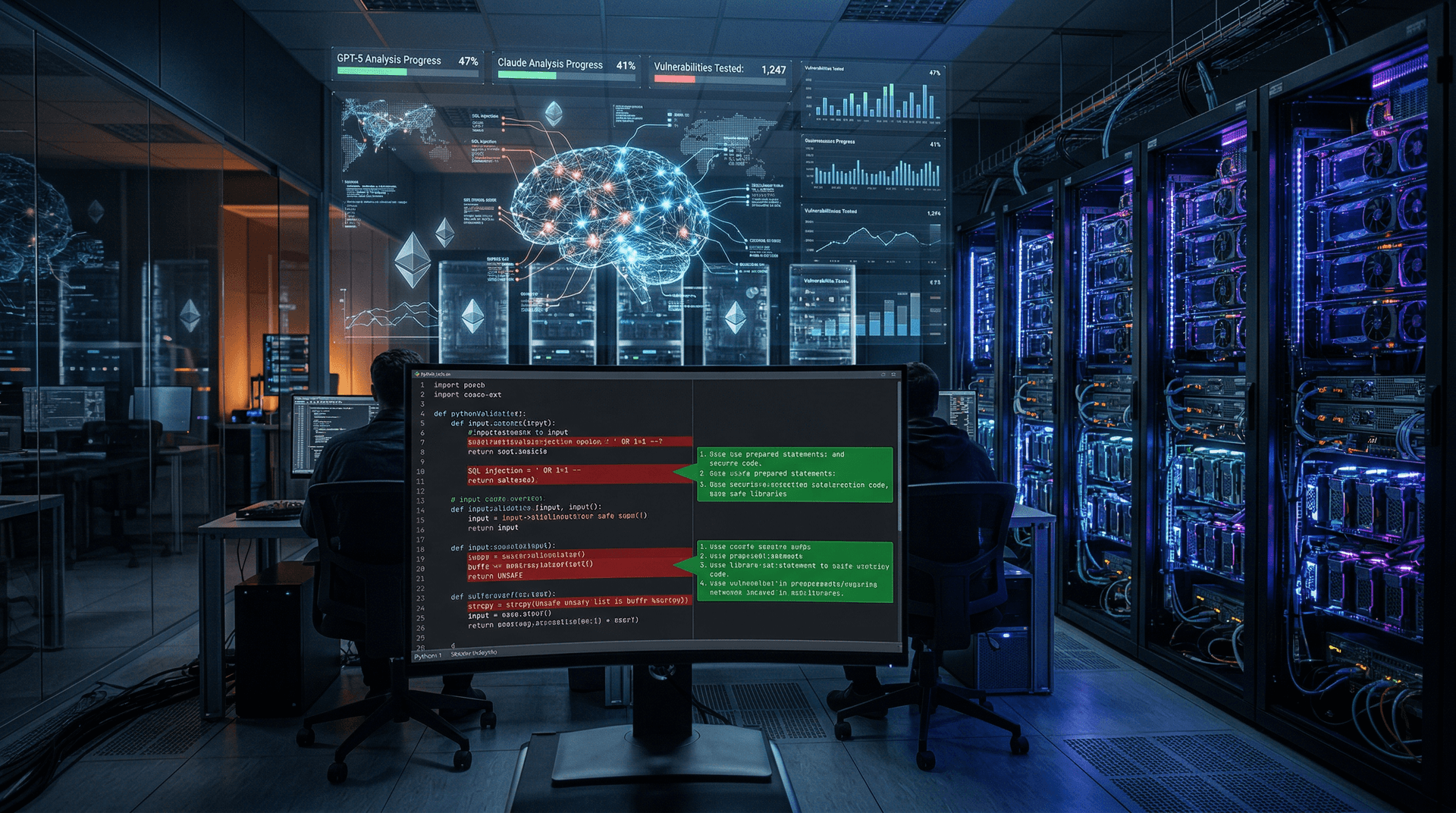

Stanford University and Hugging Face researchers released N-Day-Bench on April 14, 2026. The benchmark tests large language models (LLMs) on 1,247 confirmed vulnerabilities from 512 GitHub repositories. OpenAI's GPT-5 detected 47% of them.

Stanford's David Kim and Hugging Face's Alex Chen led the effort.

N-Day-Bench Simulates Real-World Exploit Scenarios

N-Day-Bench targets "N-day" vulnerabilities: flaws that have been publicly disclosed, and usually patched, but remain exploitable on systems that have not yet applied the fix. Models analyzed flaws between 0 and 365 days after disclosure.
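To illustrate the 0 to 365 day window, the sketch below filters a list of disclosed flaws by age. It is a minimal sketch under stated assumptions: the `Vuln` record, its fields and the placeholder CVE IDs are hypothetical, since the benchmark's data schema is not described here, and age is measured in whole days from disclosure to a chosen evaluation date.

```python
from dataclasses import dataclass
from datetime import date

MAX_AGE_DAYS = 365  # upper bound of the 0-365 day window described in the article


@dataclass
class Vuln:
    """Hypothetical record; the benchmark's real schema is not published here."""
    cve_id: str
    disclosed: date


def in_nday_window(v: Vuln, as_of: date, max_age: int = MAX_AGE_DAYS) -> bool:
    """True if the flaw was disclosed between 0 and max_age days before as_of."""
    age = (as_of - v.disclosed).days
    return 0 <= age <= max_age


def select_nday(vulns: list[Vuln], as_of: date) -> list[Vuln]:
    """Keep only the flaws that fall inside the N-day window on as_of."""
    return [v for v in vulns if in_nday_window(v, as_of)]


# Placeholder IDs, not real CVEs.
sample = [
    Vuln("CVE-0000-0001", date(2025, 4, 14)),  # 365 days old: kept
    Vuln("CVE-0000-0002", date(2025, 4, 13)),  # 366 days old: dropped
    Vuln("CVE-0000-0003", date(2026, 4, 15)),  # dated after as_of: dropped
]
print([v.cve_id for v in select_nday(sample, date(2026, 4, 14))])
```

A flaw disclosed exactly 365 days before the evaluation date stays in the window; one disclosed a day earlier, or dated after the evaluation date, does not.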

Researchers sourced the vulnerabilities from CVE databases. LLMs had to pinpoint the exact vulnerable code lines without hints. Human auditors verified 92% of the detections, according to the study.

"Models must reason across large codebases without leaks," said Alex Chen, senior researcher at Hugging Face. His team contributed 214 blockchain repositories.

The benchmark integrates with GitHub Copilot. Global cybersecurity spending reached $212 billion in 2025, according to Statista.

GPT-5 Tops Charts at 47% Detection Rate

OpenAI's GPT-5 detected 47% of the 1,247 vulnerabilities. Anthropic's Claude 4 scored 41%. Google's Gemini Ultra reached 38%.
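To make the percentages concrete, the sketch below converts each reported rate into an approximate count, assuming every rate is a share of the full 1,247-vulnerability set. Because the published rates are rounded to whole percent, each count could be off by roughly six vulnerabilities in either direction.

```python
TOTAL_VULNS = 1247  # size of the N-Day-Bench test set reported in the article

# Reported detection rates, rounded to whole percent.
RATES = {"GPT-5": 0.47, "Claude 4": 0.41, "Gemini Ultra": 0.38}


def approx_detected(rate: float, total: int = TOTAL_VULNS) -> int:
    """Approximate number of vulnerabilities found at a given detection rate."""
    return round(rate * total)


for model, rate in RATES.items():
    print(f"{model}: ~{approx_detected(rate)} of {TOTAL_VULNS}")
```

On these assumptions, GPT-5's six-point lead over Claude 4 amounts to roughly 75 additional vulnerabilities (about 586 versus 511).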

The tests ran on undisclosed hardware clusters. Models processed 5 GB codebases in under two minutes, and their false-positive rates were lower than SWE-Bench baselines.

Elena Rodriguez, lead developer at GitLab, adopted the benchmark. "It reduced our scan times by 32% across 150 million monthly projects," Rodriguez said.

In Ethereum repositories, LLMs detected 52% of smart contract vulnerabilities.

Strict Methodology Ensures Fresh Challenges

N-Day-Bench uses only real CVEs, including Log4Shell variants, all drawn from production code. None of the models were fine-tuned on the test data.

Prompts mimicked everyday developer tasks, and LLMs generated both patches and explanations. A patch counted as a success only if it overlapped at least 80% with the official fix.
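The article does not say how "80% overlap" is measured. One plausible reading, used here purely as an assumption, is the share of the official fix's changed lines (additions and removals in a unified diff) that the model's patch reproduces. The sketch below scores a patch that way; the function names, the `db.py` file and the SQL-injection example are hypothetical.

```python
from collections import Counter

SUCCESS_THRESHOLD = 0.8  # the 80% overlap criterion reported for N-Day-Bench


def changed_lines(unified_diff: str) -> Counter:
    """Count the added/removed lines of a unified diff, ignoring file headers.

    Simplification: a changed line whose own text begins with "--" or "++"
    looks like a header and is skipped; a full parser would track hunk ranges.
    """
    lines: Counter = Counter()
    for raw in unified_diff.splitlines():
        if raw.startswith(("+++", "---")):
            continue  # "--- a/file" / "+++ b/file" headers, not changes
        if raw.startswith(("+", "-")):
            # Keep the +/- sign so adding and removing the same text differ,
            # and strip whitespace so indentation differences are ignored.
            lines[raw[0] + raw[1:].strip()] += 1
    return lines


def overlap(generated: str, official: str) -> float:
    """Fraction of the official fix's changed lines reproduced by the generated patch."""
    ref = changed_lines(official)
    total = sum(ref.values())
    if total == 0:
        return 0.0
    matched = ref & changed_lines(generated)  # multiset intersection
    return sum(matched.values()) / total


def is_success(generated: str, official: str) -> bool:
    return overlap(generated, official) >= SUCCESS_THRESHOLD


official_fix = """\
--- a/db.py
+++ b/db.py
@@ -10,2 +10,2 @@
-    query = "SELECT * FROM users WHERE id = " + user_id
-    cursor.execute(query)
+    query = "SELECT * FROM users WHERE id = %s"
+    cursor.execute(query, (user_id,))
"""

model_patch = """\
--- a/db.py
+++ b/db.py
@@ -10,2 +10,2 @@
-    query = "SELECT * FROM users WHERE id = " + user_id
-    cursor.execute(query)
+    query = "SELECT * FROM users WHERE id = %s"
+    cursor.execute(query, [user_id])
"""

print(overlap(model_patch, official_fix), is_success(model_patch, official_fix))  # 0.75 False
```

Under this reading the patch fails: it parameterizes the query correctly but passes the argument as a list rather than a tuple, a form many Python database drivers also accept, so only three of the four changed lines match. A purely line-based metric penalizes such equivalent rewrites, which is why the benchmark's actual scoring method matters.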

David Kim, professor at Stanford AI Lab, oversaw validation. "Unlike prior benchmarks, ours prevents data leakage via fresh repositories," Kim said. Stanford published results on arXiv on April 14, 2026.

Gartner forecasts $18 billion for AI developer tools by 2028.

Implications for Cybersecurity and Fintech

N-Day-Bench accelerates AI adoption in cybersecurity workflows. Cryptocurrency market capitalization reached $2.8 trillion on April 14, 2026, according to CoinMarketCap.

Secure open-source code supports the growth of DeFi and fintech. Hugging Face hosts the benchmark on its Hub, where downloads exceeded 45,000 within the first few hours.

The EU AI Act mandates scans of high-risk code, and the US Cybersecurity and Infrastructure Security Agency (CISA) endorses such benchmarks for contractors.

Breakdown by Vulnerability Categories

Detection rates varied by category: 29% for memory leaks, 56% for injection flaws, and 43% for cross-site scripting (XSS).

A fine-tuned Llama 3.1 model reached 49% and outperformed GPT-5 on Rust code. Blockchain-specific tuning lifted scores by 18 percentage points.
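An 18-percentage-point lift is an absolute difference between two rates, not an 18% relative gain. The sketch below shows the distinction with a hypothetical baseline; the article does not report scores before and after tuning, so the 30% and 48% figures are illustrative only.

```python
def pp_change(before: float, after: float) -> float:
    """Absolute change in percentage points (both inputs are percentages)."""
    return after - before


def relative_change(before: float, after: float) -> float:
    """Relative change, expressed as a percent of the starting value."""
    return (after - before) / before * 100


# Hypothetical baseline, for illustration only: 30% -> 48% is an 18-point lift.
before, after = 30.0, 48.0
print(pp_change(before, after))        # 18.0
print(relative_change(before, after))  # 60.0
```

The same 18-point gain would be a much larger relative improvement on a low baseline than on a high one, which is why percentage points are the clearer unit for comparing detection rates.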

"Rust and Solidity require tailored models," Chen said. Hugging Face plans quarterly updates.

Roadmap Expands Coverage

Version 2 aims to cover 5,000 vulnerabilities by July 2026 and will add Go and Solidity support.

OpenAI plans to tune GPT-6 on the dataset. N-Day-Bench advances the reliability of LLMs for code security in blockchain finance.