In one of the most dramatic developments in the AI industry this year, OpenAI's non-profit board of directors announced on November 17, 2023, that it had removed co-founder and CEO Sam Altman. The decision, which the board attributed to Altman not being "consistently candid in his communications with the board," has sent shockwaves through Silicon Valley and beyond, raising questions about the governance of one of the world's most influential AI companies.

The Announcement and Immediate Fallout

The news broke via a terse blog post on OpenAI's website on Friday. "Mr. Altman’s departure follows a deliberative review process by the board, which concluded that he was not consistently candid in his communications with the board," the statement read. The board appointed Chief Technology Officer Mira Murati as interim CEO, tasking her with managing day-to-day operations while it searches for a permanent replacement.

The ouster wasn't isolated. The board also removed OpenAI President Greg Brockman, a key figure in the company's technical leadership and Altman's close ally, as its chairman. Within hours, Brockman announced that he was leaving the company altogether. Brockman, who had been with OpenAI since its inception in 2015, posted on X (formerly Twitter) the message he had sent to staff, which ended: "based on today's news, i quit."

Within days, the situation escalated. Reports emerged that more than 700 of OpenAI's approximately 770 employees had signed an open letter to the board, threatening to resign en masse and join Microsoft, OpenAI's largest investor and cloud computing partner. The letter demanded the reinstatement of Altman and Brockman, warning that the company's mission to ensure artificial general intelligence (AGI) benefits humanity was at risk under the current board.

Background on OpenAI's Unique Structure

To understand this upheaval, one must grasp OpenAI's unconventional governance model. Founded in 2015 as a non-profit to develop AGI safely, it adopted a "capped-profit" structure in 2019, creating a for-profit arm that could attract investment while the non-profit board retained oversight. Microsoft has reportedly committed about $13 billion to OpenAI since 2019, securing rights to commercialize its technologies, such as the GPT models that power ChatGPT.

Tensions had reportedly been brewing for months. Sources familiar with the matter told Reuters that the board had grown concerned about Altman's aggressive push toward commercialization, which potentially conflicted with the non-profit's safety-first mandate. Recent developments, including the rollout of GPT-4 Turbo and custom GPTs for ChatGPT Plus subscribers, highlighted OpenAI's shift toward consumer products and revenue generation, with a reported $1.6 billion in annualized recurring revenue.

Altman himself downplayed personal ambitions in a podcast appearance just weeks prior, emphasizing safety guardrails. However, internal debates over rapid scaling versus cautionary pauses intensified, echoing the broader AI ethics debates that followed the UK's AI Safety Summit at Bletchley Park earlier in the month.

Microsoft's Pivotal Role

Microsoft, which reportedly holds a 49% stake in OpenAI's for-profit entity, found itself thrust into the crisis. CEO Satya Nadella said publicly that Microsoft had a long-term agreement with OpenAI and remained committed to the partnership. He later announced that Altman and Brockman would join Microsoft to lead a new advanced AI research team.

By Sunday, November 19, negotiations were underway for Altman's potential return. Bloomberg reported that the board offered Altman the CEO role back with new board seats for Microsoft and the employees, but Altman countered with demands to replace most board members. Ilya Sutskever, OpenAI's chief scientist and board member, appeared conflicted—liking supportive tweets for Altman while attending board meetings.

Industry Reactions and Implications

The tech world erupted. Elon Musk, who co-founded OpenAI before leaving its board in 2018, tweeted: "+++. Board should resign." Anthropic's Dario Amodei called it "a reminder of why governance matters in AI." Investors watched nervously: OpenAI had been valued at roughly $86 billion in recent share-sale talks.

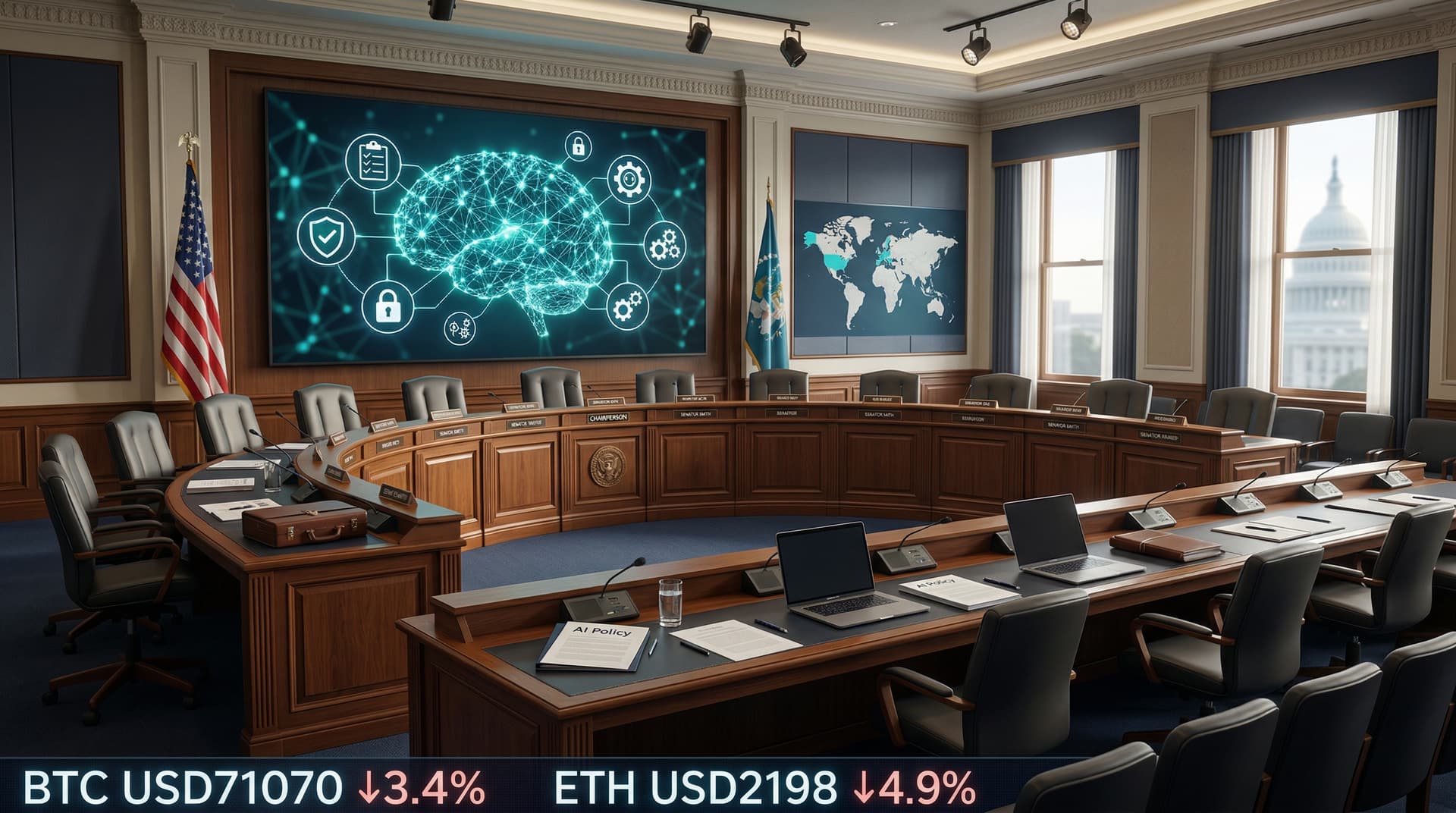

This episode underscores the fragility of AI leadership. As companies race toward AGI, broadly meaning systems that match or surpass human capabilities, governance clashes could slow innovation or lead to safety lapses. OpenAI's turmoil might also embolden regulators: the EU's AI Act is in its final negotiations, and the White House issued an executive order on AI safety on October 30.

For ChatGPT's 100 million weekly users and enterprise clients like PwC and McKinsey, disruptions seem minimal so far. Murati assured continuity, but talent flight risks loom. If Altman returns, it could stabilize OpenAI; if not, fragmentation might benefit rivals like Google DeepMind or xAI.

What Happens Next?

As of November 19, 2023, the board is set to meet again amid mounting pressure. Employee morale is key, because OpenAI's edge lies in top talent poached from Google, Meta, and academia. Altman's charisma drove partnerships; his exit tests the company's resilience.

This isn't just corporate drama; it's a pivotal moment for AI's trajectory. Will profit motives eclipse safety, or will boards reclaim control? OpenAI's saga will shape how we build and govern superintelligent systems.

CSN News will continue monitoring developments.
