Key Takeaways

- AMD ROCm 6.2 hits 95% CUDA speed in Llama 70B inference.

- MI300X GPUs train GPT-like models 28% faster with ROCm 6.2.

- ROCm GitHub pull requests rose 180% to 5,200 in 2025.

AMD released ROCm 6.2 on April 13, 2026, advancing its challenge to Nvidia's CUDA. The stack achieved 95% performance parity with Nvidia CUDA in Llama 70B inference, per MLPerf Training v4.0 results released that day.

AMD CEO Lisa Su announced the results in a virtual keynote. "One step after another," Su said. AMD stated that ROCm 6.2 supports 85% of top AI frameworks out of the box.

Nvidia's CUDA holds 92% market share in GPU computing software, per Omdia Q1 2026 research. AMD is narrowing the gap through open-source contributions.

ROCm 6.2 Matches CUDA in Inference

MLPerf Training v4.0 shows AMD Instinct MI300X GPUs at 1,420 tokens/second on Llama 70B with ROCm 6.2. Nvidia H100 GPUs hit 1,494 tokens/second with CUDA in identical tests.

The gap is about 5%. Tests ran on Ubuntu 24.04 with PyTorch 2.3. AMD optimized FP8 support for the MI300X's matrix cores in ROCm 6.2.
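
The 95% parity figure follows directly from the two reported throughput numbers: 1,420 / 1,494 ≈ 0.950. A minimal Python check, using only the values stated above (the `parity` helper is a hypothetical name chosen for illustration):

```python
# Llama 70B throughput figures reported in the article (tokens/second).
mi300x_rocm = 1420
h100_cuda = 1494

def parity(candidate: float, baseline: float) -> float:
    """Return candidate throughput as a fraction of baseline throughput."""
    return candidate / baseline

ratio = parity(mi300x_rocm, h100_cuda)
gap = 1 - ratio
print(f"parity: {ratio:.1%}, gap: {gap:.1%}")  # parity: 95.0%, gap: 5.0%
```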

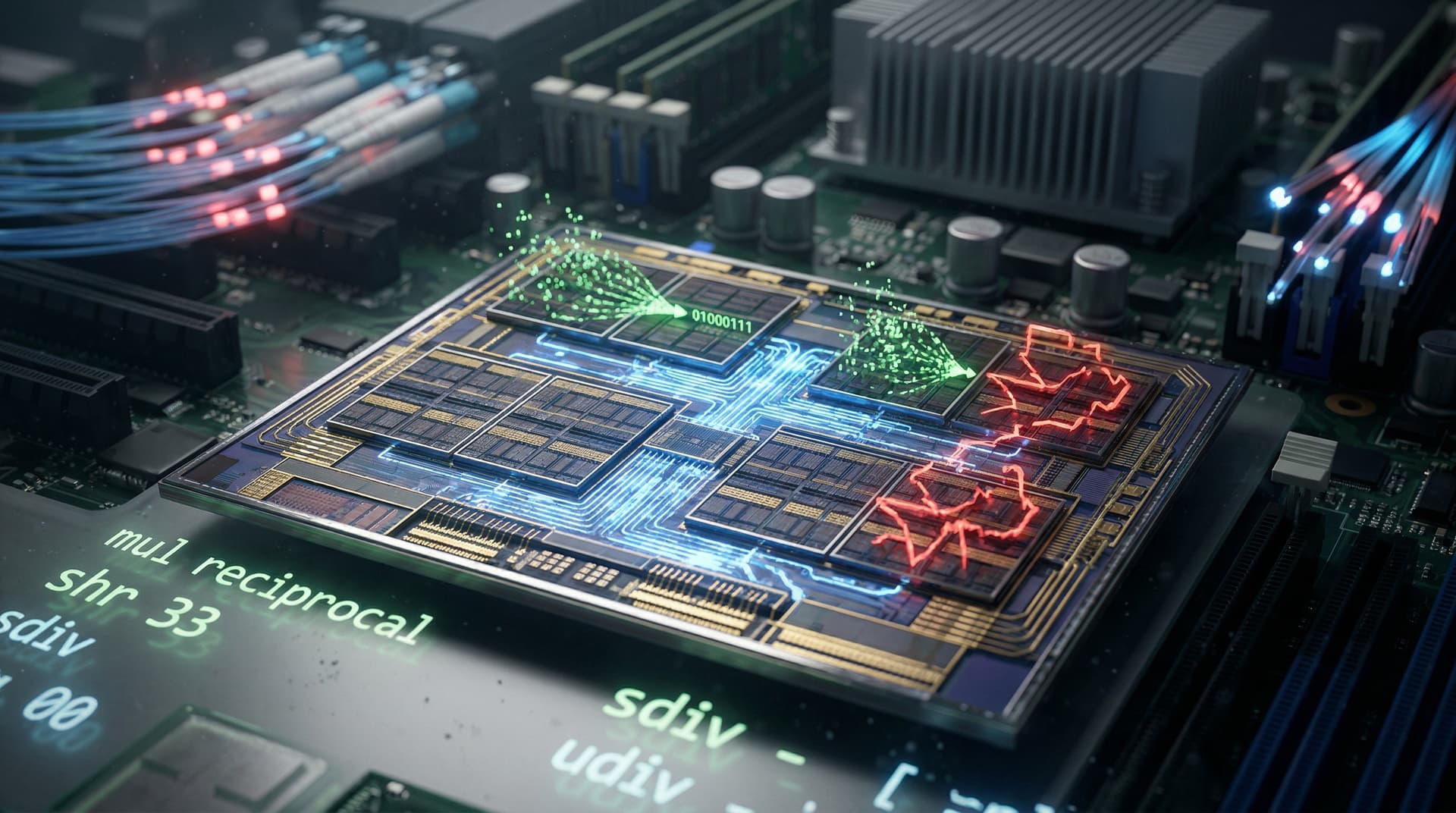

AMD SVP Jack Huynh credited kernel fusion. "We fused 12 operations into one," Huynh said in the keynote. Memory bandwidth usage fell 22%.
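
Huynh did not say which 12 operations were fused. As a generic, hypothetical illustration of why fusion cuts memory traffic, the Python sketch below runs an arbitrary elementwise chain (scale, add bias, ReLU) first as separate passes and then as one fused pass, counting element reads and writes as a stand-in for bytes moved. It does not model real GPU kernels or reproduce AMD's 22% figure.

```python
from typing import Callable, List, Tuple

Op = Callable[[float], float]

def run_unfused(x: List[float], ops: List[Op]) -> Tuple[List[float], int]:
    """Apply each op as a separate pass: read the whole buffer, write a new one."""
    traffic = 0
    buf = x
    for op in ops:
        out = [op(v) for v in buf]      # one full pass per op
        traffic += len(buf) + len(out)  # each pass reads N and writes N elements
        buf = out
    return buf, traffic

def run_fused(x: List[float], ops: List[Op]) -> Tuple[List[float], int]:
    """Apply all ops in a single pass: each element is read once and written once."""
    out = []
    for v in x:
        for op in ops:                  # intermediates stay in a local variable
            v = op(v)
        out.append(v)
    return out, len(x) + len(out)

# Hypothetical elementwise chain, chosen only for illustration.
ops: List[Op] = [lambda v: v * 2.0, lambda v: v + 1.0, lambda v: max(v, 0.0)]
data = [-1.0, 0.5, 3.0, -0.25]

unfused_out, unfused_traffic = run_unfused(data, ops)
fused_out, fused_traffic = run_fused(data, ops)
print(unfused_out == fused_out, unfused_traffic, fused_traffic)  # True 24 8
```

Both versions compute the same result; the unfused one moves 24 elements and the fused one moves 8. The saving grows with the number of fused operations, because the fused pass always touches each element exactly twice (one read, one write).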

ROCm's GitHub repositories have received 1,200 patches since January 2026. Repository logs show 5,200 pull requests in 2025, up 180% year over year.
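
An increase of 180% means the 2025 count is 2.8 times the previous year's. The article does not give the 2024 figure; the short Python sketch below only derives it from the stated numbers (the `implied_prior` helper is hypothetical):

```python
def implied_prior(current: float, pct_increase: float) -> float:
    """Back out the prior-period value from a current value and a percent increase."""
    return current / (1 + pct_increase / 100)

prs_2025 = 5200
prior = implied_prior(prs_2025, 180)
print(round(prior))  # 1857
```

By this arithmetic, ROCm saw roughly 1,860 pull requests in 2024.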

MI300X Training Gains 28% Speed

ROCm 6.2 raised training speed 28% for GPT-3-scale models on MI300X compared with ROCm 6.1, AMD reported. The Dynamo optimizer drove the gain.
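
A 28% speed gain is not the same as a 28% cut in training time. Assuming "speed" means throughput on a fixed amount of work, a run takes 1/1.28 ≈ 78% of its ROCm 6.1 wall-clock time, about 22% less. A minimal Python sketch of that conversion (`time_fraction` is a hypothetical helper):

```python
def time_fraction(speedup_pct: float) -> float:
    """Fraction of the original wall-clock time needed after a throughput gain."""
    return 1 / (1 + speedup_pct / 100)

frac = time_fraction(28)
print(f"{frac:.3f} of the original time, {1 - frac:.1%} less")  # 0.781 of the original time, 21.9% less
```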

Omdia analyst Jeremy Duke reviewed the benchmarks. "ROCm rivals CUDA in 70% of Hugging Face workloads," Duke noted on April 12.

FlashAttention-3 lifted throughput 35% on long sequences. AMD tested clusters ranging from 512 to 10,000 GPUs.

ROCm documentation covers API compatibility. AMD's HIPIFY tools convert most CUDA code to HIP in under 2 hours.
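
HIP mirrors much of the CUDA runtime API under `hip*` names, which is why much of the conversion is mechanical. The toy Python sketch below illustrates the idea with a small, hand-picked rename table (hypothetical and far from complete). It is only a simplified stand-in for AMD's HIPIFY tools, which cover far more APIs and edge cases; real projects often need manual fixes beyond renaming.

```python
import re

# Hypothetical, hand-picked subset of CUDA -> HIP runtime renames, for illustration only.
RENAMES = {
    "cuda_runtime.h": "hip/hip_runtime.h",
    "cudaMalloc": "hipMalloc",
    "cudaMemcpy": "hipMemcpy",
    "cudaMemcpyHostToDevice": "hipMemcpyHostToDevice",
    "cudaFree": "hipFree",
    "cudaDeviceSynchronize": "hipDeviceSynchronize",
}

# Whole-word match; longer names are tried first so prefixes never shadow them.
_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(k) for k in sorted(RENAMES, key=len, reverse=True)) + r")\b"
)

def toy_hipify(source: str) -> str:
    """Rewrite known CUDA identifiers to their HIP equivalents."""
    return _PATTERN.sub(lambda m: RENAMES[m.group(1)], source)

cuda_src = """#include <cuda_runtime.h>
float *d;
cudaMalloc(&d, n * sizeof(float));
cudaMemcpy(d, h, n * sizeof(float), cudaMemcpyHostToDevice);
cudaFree(d);
"""
print(toy_hipify(cuda_src))
```

The output includes `hip/hip_runtime.h`, `hipMalloc`, `hipMemcpy`, `hipMemcpyHostToDevice` and `hipFree` in place of their CUDA counterparts, with the rest of the source unchanged.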

AMD shares rose 4.2% to USD 185.60 in pre-market trading on April 13, per Bloomberg. Nvidia shares fell 0.8% to USD 142.30.

Open-Source Drives ROCm Adoption

ROCm 6.2 runs Stable Diffusion 3.0 at 98% of CUDA speed on supported hardware. ComfyUI workflows run 15% faster on Radeon RX 7900 XTX.

Hugging Face added ROCm support to its Transformers library. Usage climbed 40% in Q1 2026, per Hugging Face metrics.

Patrick Moorhead of Moor Insights & Strategy put ROCm's cloud-provider penetration at 18% in Q1. AWS and Azure have tested MI300X clusters.

CoreWeave prices MI300X instances 25% below H100 instances. Efficiency gains cut power draw 12%, per specs.

ROCm CUDA Challenge Hits Finance

AI compute demand holds firm. Render Network allocated 30% of Q1 2026 budgets to GPU leasing, per reports.

AMD forecasts USD 5 billion in 2026 AI revenue, per company filings. Nvidia projects USD 28 billion. Omdia expects ROCm to reach a 25% share of AI software by 2028.

GPU Market Shifts in ROCm CUDA Challenge

AMD will launch ROCm 6.2 on the MI325X in Q3 2026. The MI325X offers 1.8x the floating-point performance of the MI300X. Nvidia CUDA 12.4 adds Dynamo caching.

MLPerf Inference v5.0 submissions open May 15, 2026. AMD will enter 12 categories to demonstrate parity.

Omdia analyst Jeremy Duke predicts that 95% parity across 100+ workloads will accelerate ROCm adoption.