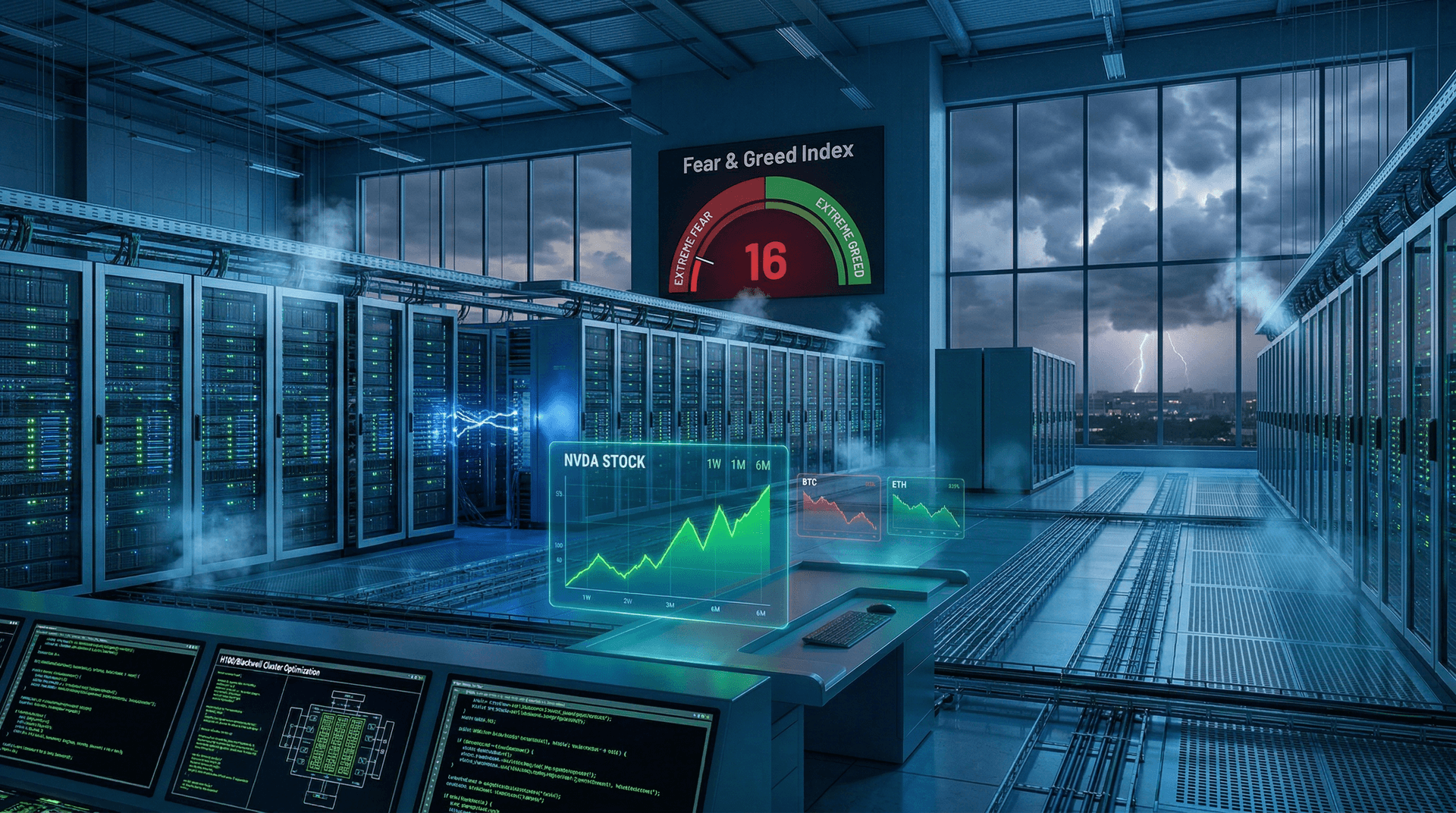

On August 5, 2024, Elon Musk's xAI dropped a bombshell in the AI world by unveiling Colossus, billed as the world's most powerful AI training cluster to date. Housing an astonishing 100,000 Nvidia H100 GPUs, this behemoth was constructed in a mere 122 days at a former Electrolux factory in Memphis, Tennessee. The announcement, shared via Musk's X platform, underscores xAI's aggressive push to rival industry giants like OpenAI, Google, and Meta in the high-stakes race for artificial general intelligence (AGI).

Record-Breaking Build and Scale

What makes Colossus truly remarkable isn't just its size but the blistering pace of its assembly. Partnering with Nvidia, Supermicro, and the Tennessee Valley Authority, xAI transformed an idle factory into a cutting-edge data center capable of delivering exaflops of compute power. Each H100 GPU, Nvidia's flagship for AI workloads, packs 80GB of HBM3 memory and tensor cores optimized for transformer models—the backbone of large language models like Grok.
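To put "exaflops" in perspective, here is a rough back-of-envelope sketch. It multiplies the GPU count and per-GPU memory quoted above by an assumed per-GPU peak of about 989 TFLOPS (dense BF16, a datasheet-style figure that is not stated in the announcement). Treat the result as a theoretical ceiling, not sustained training throughput.

```python
# Back-of-envelope totals for a 100,000-GPU H100 cluster.
# ASSUMPTION: ~989 TFLOPS dense BF16 per GPU (vendor peak, not sustained throughput).

GPU_COUNT = 100_000               # from the article
HBM_PER_GPU_GB = 80               # from the article
PEAK_BF16_TFLOPS_PER_GPU = 989    # assumed datasheet peak


def aggregate_peak_exaflops(gpu_count: int, tflops_per_gpu: float) -> float:
    """Theoretical peak in exaFLOPS (1 exaFLOPS = 1e6 TFLOPS)."""
    return gpu_count * tflops_per_gpu / 1e6


def aggregate_hbm_petabytes(gpu_count: int, gb_per_gpu: float) -> float:
    """Total HBM capacity in petabytes (decimal units, 1 PB = 1e6 GB)."""
    return gpu_count * gb_per_gpu / 1e6


if __name__ == "__main__":
    peak = aggregate_peak_exaflops(GPU_COUNT, PEAK_BF16_TFLOPS_PER_GPU)
    hbm = aggregate_hbm_petabytes(GPU_COUNT, HBM_PER_GPU_GB)
    print(f"Peak BF16: {peak:.1f} exaFLOPS")
    print(f"Total HBM: {hbm:.1f} PB")
```

Under these assumptions the cluster tops out near 99 exaFLOPS of peak BF16 compute and about 8 PB of HBM. Real training runs typically achieve only a fraction of peak.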

"Colossus is online and training," Musk tweeted, hinting at immediate utilization. The cluster's liquid-cooled design is meant to keep it efficient, with plans to expand to 200,000 GPUs soon and potentially 1 million Nvidia Blackwell GPUs by 2025. This isn't hype; it's a tangible leap that dwarfs public clusters like Meta's roughly 24,000-GPU setup, even if on paper it trails the 131,072-GPU Oracle cluster announced earlier this year.

| Cluster | GPUs | Builder | Purpose |
|---------|------|---------|---------|
| Colossus | 100,000 H100 | xAI | Grok training |
| Llama 3 | 24,576 H100 | Meta | Llama models |
| OCI | 131,072 H100 equiv. | Oracle | General AI |
| xAI Future | 1M Blackwell | xAI | Grok 3+ |

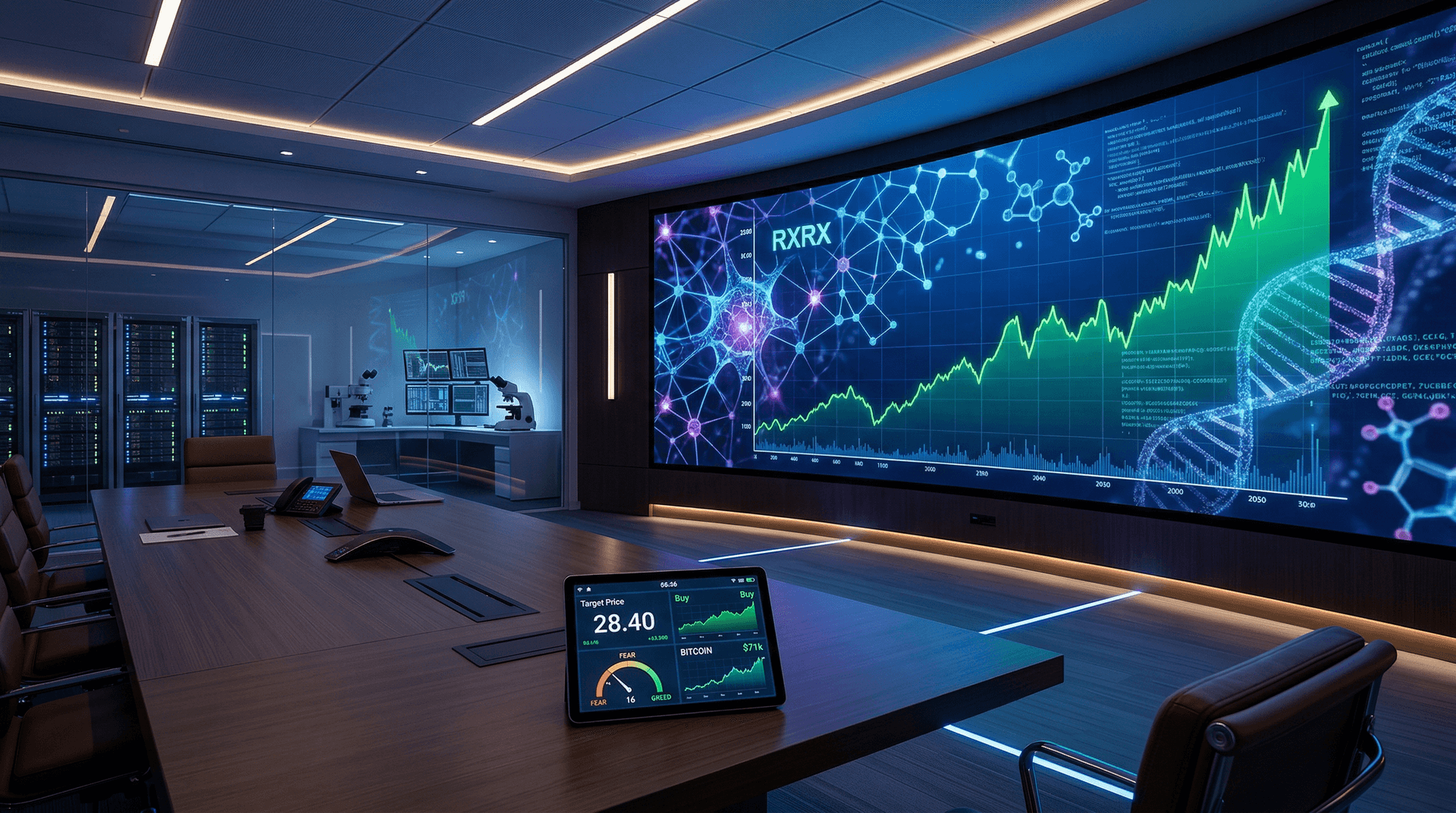

Fueling Grok's Evolution

Colossus is purpose-built for training xAI's Grok family of models. Grok-1, released in March 2024 as a 314-billion-parameter Mixture-of-Experts (MoE) model, was trained on a smaller 20,000-GPU cluster. Now, with Colossus, xAI eyes Grok 2, with a preview slated for the end of August, and Grok 3 by December, both promising multimodal capabilities rivaling GPT-4o and Claude 3.5 Sonnet.
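For a sense of why models at this scale need many GPUs at once, the sketch below estimates the memory needed just to hold Grok-1's 314 billion parameters. It assumes 2 bytes per parameter (16-bit weights) and 80 GB of memory per GPU. Both are illustrative assumptions, and the estimate ignores optimizer state, gradients, and activations, which push training requirements far higher.

```python
import math

PARAM_COUNT = 314_000_000_000   # Grok-1 parameter count, from the article
BYTES_PER_PARAM = 2             # ASSUMPTION: 16-bit (e.g. BF16) weights
HBM_PER_GPU_GB = 80             # H100 memory capacity, from the article


def weights_gigabytes(param_count: int, bytes_per_param: int) -> float:
    """Size of the raw weights in gigabytes (decimal, 1 GB = 1e9 bytes)."""
    return param_count * bytes_per_param / 1e9


def min_gpus_for_weights(weights_gb: float, hbm_gb_per_gpu: float) -> int:
    """Lower bound on GPUs needed to hold the weights alone."""
    return math.ceil(weights_gb / hbm_gb_per_gpu)


if __name__ == "__main__":
    size_gb = weights_gigabytes(PARAM_COUNT, BYTES_PER_PARAM)
    print(f"Weights: {size_gb:.0f} GB")
    print(f"GPUs for weights alone: {min_gpus_for_weights(size_gb, HBM_PER_GPU_GB)}")
```

Under these assumptions the weights alone come to 628 GB, or at least eight 80 GB GPUs before any training state is counted.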

Musk has positioned Grok as a "maximum truth-seeking" AI, contrasting it with what he calls "woke" competitors. Integrated into X (formerly Twitter), Grok leverages real-time data from the platform's 500 million users, giving it an edge in current events and unfiltered responses. Colossus will supercharge this, enabling models with trillions of parameters trained on diverse, high-quality datasets.

The AI Arms Race Heats Up

This launch comes amid escalating compute wars. Hyperscalers like Microsoft (with OpenAI) and Google hoard Nvidia chips, facing shortages that have driven H100 prices to $40,000+ per unit. xAI's feat highlights supply chain wizardry—Musk credited Nvidia CEO Jensen Huang for priority allocation, a nod to Tesla's longstanding partnership.

Critics question the energy demands: Colossus initially draws about 150 MW, rivaling a small city. xAI aims to offset this with renewables and efficient cooling, but sustainability remains a flashpoint. Economically, the $6 billion Series B funding round in May 2024 (valuing xAI at $24B) bankrolled the build, attracting investors like Andreessen Horowitz and Sequoia.
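The power and cost figures quoted here can be sanity-checked with simple arithmetic. The sketch below uses only numbers from the article, plus one assumption: a nominal 700 W board power for an H100, used to show how much of the site budget goes to everything around the GPUs (CPUs, networking, cooling). It also treats the quoted $40,000 unit price as a flat list price, which real bulk deals almost certainly are not.

```python
GPU_COUNT = 100_000              # from the article
SITE_POWER_MW = 150              # from the article
H100_UNIT_PRICE_USD = 40_000     # from the article ("$40,000+"), treated as flat
SERIES_B_USD = 6_000_000_000     # from the article
H100_BOARD_POWER_W = 700         # ASSUMPTION: nominal per-GPU board power


def watts_per_gpu(site_power_mw: float, gpu_count: int) -> float:
    """Site power divided evenly across GPUs, in watts."""
    return site_power_mw * 1e6 / gpu_count


def gpu_capex_usd(gpu_count: int, unit_price_usd: int) -> int:
    """GPU-only hardware cost at a flat unit price."""
    return gpu_count * unit_price_usd


if __name__ == "__main__":
    per_gpu = watts_per_gpu(SITE_POWER_MW, GPU_COUNT)
    capex = gpu_capex_usd(GPU_COUNT, H100_UNIT_PRICE_USD)
    print(f"Site power per GPU: {per_gpu:.0f} W "
          f"({per_gpu / H100_BOARD_POWER_W:.2f}x the assumed board power)")
    print(f"GPU-only cost: ${capex / 1e9:.1f}B "
          f"({capex / SERIES_B_USD:.0%} of the Series B)")
```

On these numbers, each GPU accounts for about 1,500 W of site power, roughly double the assumed board power, and the GPUs alone would cost about $4 billion, around two thirds of the Series B.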

xAI isn't alone. Anthropic has committed to training future Claude models on AWS Trainium, while China's DeepSeek pushes boundaries on export-restricted Nvidia hardware. Yet Colossus sets a new bar for single-site clusters, and it could accelerate open-weight models if xAI releases future weights as it did with Grok-1.

Broader Implications for AI Development

For developers and researchers, Colossus signals democratization—or centralization?—of mega-scale training. Smaller labs struggle with GPU access, but xAI's speed (122 days vs. years for peers) could inspire modular designs. Expect ripple effects: Nvidia stock surged post-announcement, reinforcing its AI moat.

Musk envisions Colossus as step one toward AGI. "Reality is the ultimate judge," he tweeted, emphasizing uncensored training data. As Grok 2 nears, benchmarks will test if compute alone delivers breakthroughs, or if data quality and architecture reign supreme.

Challenges Ahead

Scaling isn't frictionless. The interconnect (Nvidia's Spectrum-X Ethernet fabric rather than InfiniBand) and software stacks like xAI's custom Kubernetes must move petabytes of data without becoming bottlenecks. Fault tolerance across 100,000 GPUs is Herculean, but the experience Musk's engineers gained on Tesla's Dojo supercomputer bodes well.
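Why fault tolerance gets so hard at this scale can be illustrated with a simple reliability sketch. It assumes independent failures, so the cluster-wide mean time between failures (MTBF) is the per-GPU MTBF divided by the GPU count, and it uses Young's classic approximation for a checkpoint interval. The 50,000-hour per-GPU MTBF and one-minute checkpoint cost are hypothetical placeholders, not xAI figures.

```python
import math

GPU_COUNT = 100_000                 # from the article
PER_GPU_MTBF_HOURS = 50_000         # HYPOTHETICAL: illustrative only
CHECKPOINT_COST_HOURS = 1 / 60      # HYPOTHETICAL: one minute per checkpoint


def cluster_mtbf_hours(component_mtbf_hours: float, component_count: int) -> float:
    """Mean time between failures anywhere in the cluster,
    assuming independent, exponentially distributed failures."""
    return component_mtbf_hours / component_count


def young_checkpoint_interval_hours(checkpoint_cost_hours: float,
                                    mtbf_hours: float) -> float:
    """Young's approximation: interval ~ sqrt(2 * checkpoint cost * MTBF)."""
    return math.sqrt(2 * checkpoint_cost_hours * mtbf_hours)


if __name__ == "__main__":
    mtbf = cluster_mtbf_hours(PER_GPU_MTBF_HOURS, GPU_COUNT)
    interval = young_checkpoint_interval_hours(CHECKPOINT_COST_HOURS, mtbf)
    print(f"Cluster MTBF: {mtbf * 60:.0f} minutes")
    print(f"Suggested checkpoint interval: {interval * 60:.1f} minutes")
```

With these placeholder inputs, a failure somewhere in the cluster would be expected about every 30 minutes, and the formula suggests checkpointing roughly every 8 minutes. The exact numbers matter less than the trend: per-component reliability that sounds generous shrinks to minutes once it is divided across 100,000 GPUs.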

Geopolitically, U.S. export controls on H100s to China amplify xAI's domestic edge. Memphis, with cheap power and incentives, emerges as an AI hub, boosting local jobs.

Looking Forward

Colossus isn't a finish line; it's a launchpad. With expansions planned, xAI aims to outpace rivals by 2025. For the AI ecosystem, this means faster iteration cycles, bolder models, and intensified competition. As Musk quipped, "The Colossus hath awoken."

In the pantheon of AI milestones—from GPT-3's 175B parameters to today's exascale clusters—Colossus stands tall. Watch this space: Grok 2's debut could redefine what's possible.
